Anthropic is proving a critical point: relying on the raw power of a neural network alone in a production environment is a dead end. A deep dive into the Claude Code architecture shows rigorous engineering discipline behind the AI facade. Instead of endless prompting, the system uses a classic industrial pipeline: a coordinator, a dependency planner, and a message bus. Where a casual user might simply ask a model to 'build an app,' Claude Code decomposes that request into dozens of granular operations and manages the queues and data flows between agents. For practical purposes, this settles the debate over 'magic buttons': stability for the business comes from the architectural management layer, not from the model itself.

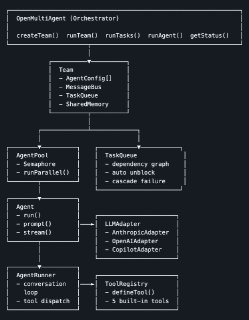
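
To make the coordinator, dependency planner, and message bus idea concrete, here is a minimal sketch in Python. It is not Claude Code's actual implementation: the task names, `run_agent`, and `coordinate` are hypothetical, the "bus" is just a dictionary, and the planner is the standard library's `graphlib.TopologicalSorter`, used here as a stand-in.

```python
from graphlib import TopologicalSorter

# Hypothetical decomposition of "build an app":
# each task maps to the set of tasks it depends on.
TASKS = {
    "design_schema": set(),
    "write_api": {"design_schema"},
    "write_ui": {"design_schema"},
    "write_tests": {"write_api", "write_ui"},
}


def run_agent(task, inputs):
    """Stand-in for an agent; a real one would call a model here."""
    return f"{task} done (inputs: {sorted(inputs)})"


def coordinate(tasks):
    """Run tasks in dependency order, passing results through a simple bus."""
    planner = TopologicalSorter(tasks)
    planner.prepare()
    bus = {}    # message bus stand-in: task name -> published result
    order = []  # the order in which tasks were dispatched
    while planner.is_active():
        # get_ready() returns every task whose dependencies are finished.
        for task in sorted(planner.get_ready()):
            inputs = {dep: bus[dep] for dep in tasks[task]}
            bus[task] = run_agent(task, inputs)
            order.append(task)
            planner.done(task)
    return order, bus


order, bus = coordinate(TASKS)
print(order)
```

The point of the sketch is that ordering and data flow are decided by ordinary code. The model only ever sees one granular task together with the outputs of the tasks it depends on.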

The open-multi-agent project is further evidence of this shift. While the official claude-agent-sdk remains heavyweight, spinning up a separate CLI process for every agent, alternative implementations are moving toward in-process execution. In practice, this means agents can run in serverless functions, in Docker containers, and in CI/CD pipelines without a per-agent process driving up infrastructure bills. The result is agentic chains that embed directly into existing business processes rather than living in expensive, isolated sandboxes.
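
The in-process idea can be illustrated with a small Python sketch. It shows the general pattern only and is not the API of open-multi-agent or claude-agent-sdk: the `agent`, `main`, and `run_in_process` names are hypothetical, and the agents are plain `asyncio` tasks that share queues inside one process instead of each owning a CLI subprocess.

```python
import asyncio


async def agent(name, inbox, outbox):
    """Hypothetical in-process agent: take a job, publish a result."""
    while True:
        job = await inbox.get()
        if job is None:  # sentinel: no more work for this agent
            break
        # A real agent would await a model API call here.
        await outbox.put((name, f"{name} handled {job!r}"))


async def main(jobs, n_agents=2):
    inbox, outbox = asyncio.Queue(), asyncio.Queue()
    # All agents are coroutines in the same process and event loop.
    workers = [
        asyncio.create_task(agent(f"agent-{i}", inbox, outbox))
        for i in range(n_agents)
    ]
    for job in jobs:
        await inbox.put(job)
    for _ in workers:  # one shutdown sentinel per agent
        await inbox.put(None)
    await asyncio.gather(*workers)

    results = []
    while not outbox.empty():
        results.append(outbox.get_nowait())
    return results


def run_in_process(jobs):
    """Entry point that a serverless handler or CI step could call directly."""
    return asyncio.run(main(jobs))


results = run_in_process(["plan", "code", "test"])
print(len(results))
```

Because nothing here starts an external process, the whole chain is one function call, which is what makes it straightforward to host in a serverless function, a container, or a pipeline step.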

The key takeaway for management is clear: AI reliability now depends less on model training and more on queue management and role separation. Today, investing in the search for the 'best LLM' makes less sense than investing in your orchestration layer. It is this layer that lets you scale automation while keeping control and a sensible ROI.