Governments are rushing to automate administrative processes in a bid for efficiency, but the shift requires a specialized 'compliance layer' to ensure that probabilistic AI outputs remain legally valid. A recent arXiv preprint, 'AI Governance under Political Turnover,' introduces the concept of the 'Alignment Surface,' a framework designed to make algorithmic decisions verifiable and reproducible.

The irony is that these frameworks, built to keep AI within legal bounds, also establish fixed 'approval boundaries.' The authors argue that such transparency lets political successors study the system’s limits and steer outcomes while maintaining a facade of legality.

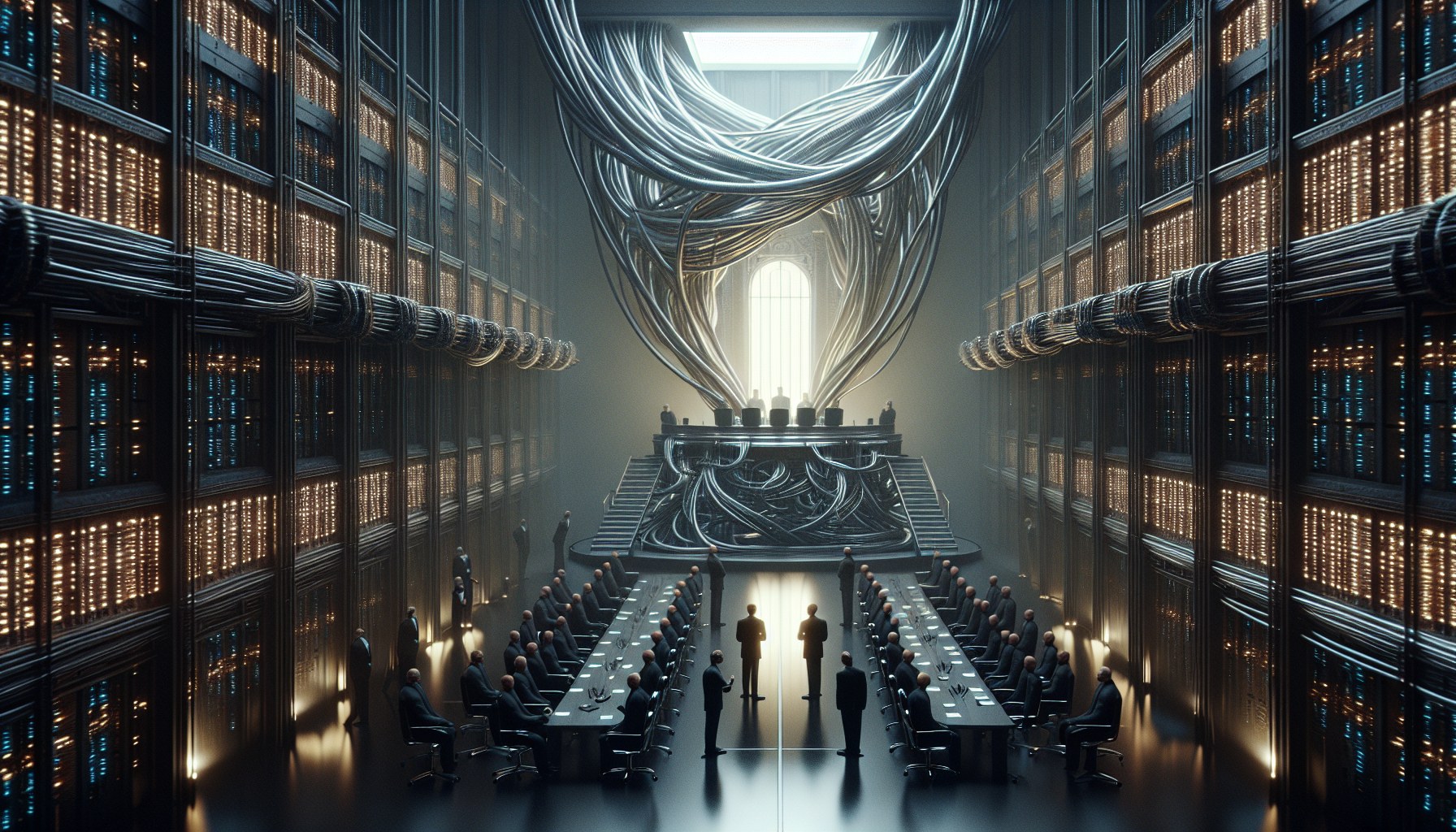

Designing systems with rigid codification and high repeatability creates a paradox: transparency becomes a vulnerability. As the preprint explains, the more predictable the automation, the easier it is for officials to exploit it through iterative testing and strategic calibration. Reforms originally intended as oversight tools become a roadmap for future administrations, a manual for bending the system to their will without formally violating any rules. Consequently, large-scale AI implementations become nearly impossible to dismantle once they are fused into the institutional mechanisms of power retention.
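To make the "iterative testing" point concrete, here is a minimal sketch of how a fixed, repeatable approval rule can be mapped from the outside. It is an illustration only, not the preprint's method: the `approve` rule, the `HIDDEN_THRESHOLD` value, and the `locate_boundary` helper are all hypothetical, and the sketch assumes the decision is monotone in a single score.

```python
from typing import Callable, Tuple

# Hypothetical fixed approval rule: a case is approved when its score
# reaches a hidden threshold. The rule and the value are illustrative.
HIDDEN_THRESHOLD = 0.62


def approve(score: float) -> bool:
    """Deterministic, repeatable decision: same input, same output."""
    return score >= HIDDEN_THRESHOLD


def locate_boundary(decide: Callable[[float], bool],
                    lo: float = 0.0, hi: float = 1.0,
                    tol: float = 1e-3) -> Tuple[float, int]:
    """Binary-search the approval boundary using only yes/no answers.

    Assumes decide(lo) is False, decide(hi) is True, and decide is monotone.
    Returns an approved score within `tol` of the boundary and the number
    of queries that were needed to find it.
    """
    queries = 0
    while hi - lo > tol:
        mid = (lo + hi) / 2
        queries += 1
        if decide(mid):
            hi = mid  # approved: the boundary is at or below mid
        else:
            lo = mid  # rejected: the boundary is above mid
    return hi, queries


boundary, queries = locate_boundary(approve)
print(f"estimated boundary: {boundary:.3f} after {queries} queries")
```

Because the rule never varies, each probe halves the remaining uncertainty, so a handful of test submissions is enough to find a score that passes by the narrowest possible margin. A real system with many inputs would take more effort to map, but the same logic applies: repeatability is what makes the probing cheap.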

For C-suite executives and CTOs, the signal is clear: monolithic compliance rules are a direct liability when regulation is volatile. Instead of static safeguards, the researchers suggest adaptive governance built around flexible approval boundaries. From our perspective, this is the only way to maintain operational resilience when political direction shifts. Decision-makers must understand that a perfectly transparent compliance layer today is a manual for regulatory interference tomorrow.

For the private sector, the hype surrounding 'Explainable AI' (XAI) in government contracts is effectively laying the groundwork for political meddling that stays formally within the rules. If you are building infrastructure for the public sector, your value no longer lies solely in the algorithm itself, but in an Alignment Surface that balances legal protection with strategic flexibility. Betting on rigid architecture now ensures that your solution will be easily weaponized by the next administration.