Inflated expectations have finally collided with the harsh reality of server math. Investors and founders continue to value AI businesses using the Software 1.0 playbook, relying on revenue multiples, CAC, and LTV as if the underlying economic structure hadn't shifted. This is a dangerous self-deception. Median multiples for AI startups currently sit at 25–30x revenue, roughly five times traditional SaaS benchmarks. Yet recent McKinsey data is sobering: only 39% of organizations see any EBIT impact from AI implementation, and most of those estimate the contribution at less than 5%. Strip away the PR gloss, and you’ll find a chasm between market capitalization and actual cash-generating capacity.

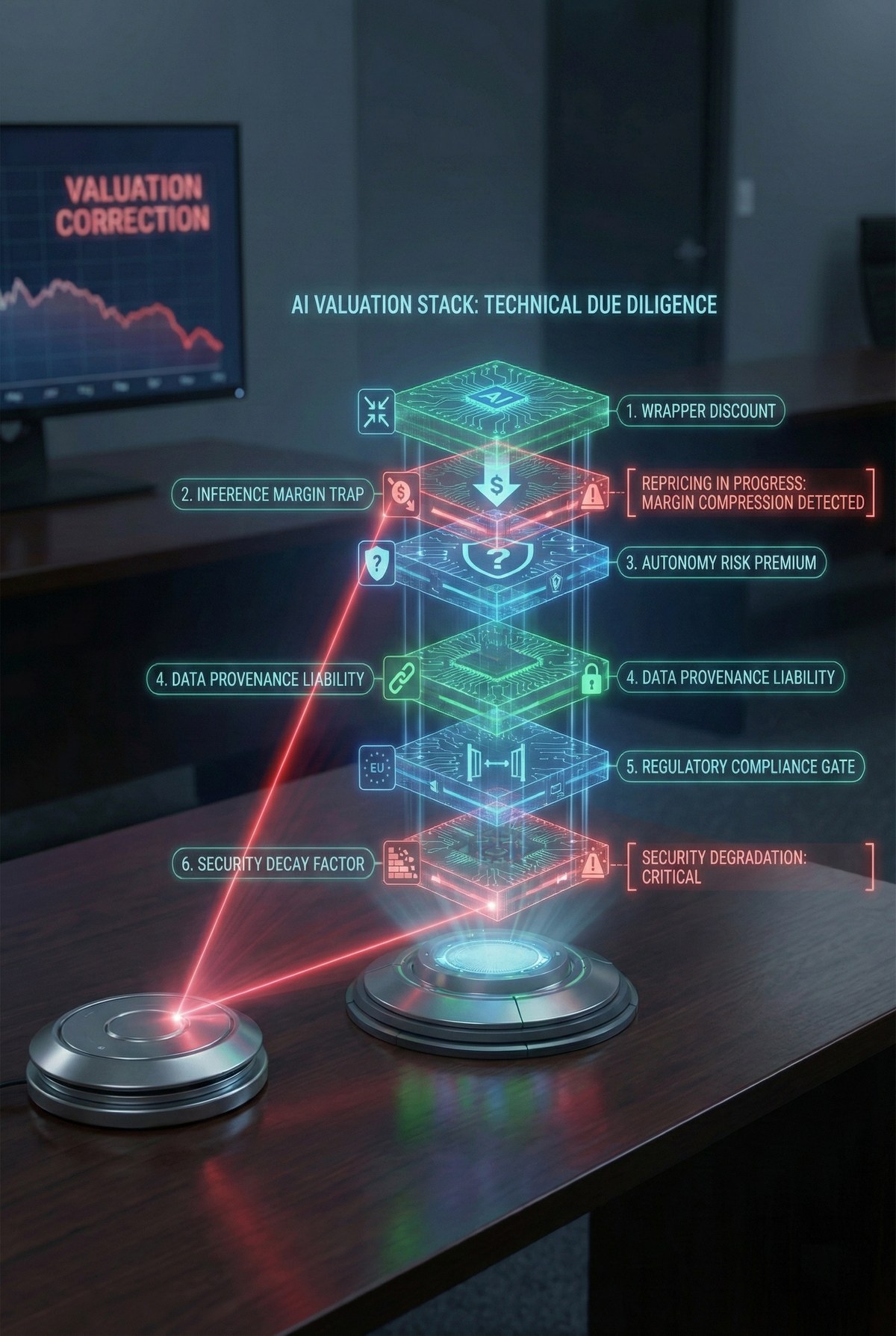

The first to fall are the "wrappers"—services that offer little more than a thin interface over third-party APIs like GPT-4. These projects lack proprietary data, custom training, or any defensible architecture. Market mechanics are unforgiving: the value of these projects is evaporating. While vertical companies with deep domain expertise trade at 9–12x ARR, wrappers are struggling to maintain even 3–4x. The moment OpenAI or Google releases a native feature that duplicates a startup’s functionality—which they will do by analyzing your own API request patterns—the company’s value drops to zero. For investors, there is now a brutal operational litmus test: ask a team to switch model providers within 30 days. If they can’t, you aren't looking at a tech business; you're looking at a house of cards built on Sam Altman’s whims.
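One way to make the 30-day switch test concrete is to check whether product code depends on a vendor SDK directly or on a thin internal interface. The sketch below is illustrative only: `CompletionProvider`, `StubVendorA`, `StubVendorB`, and `summarize_ticket` are hypothetical names, and the stub classes stand in for real vendor adapters rather than calling any actual API.

```python
from typing import Protocol


class CompletionProvider(Protocol):
    """Hypothetical interface: the only thing product code may depend on."""

    def complete(self, prompt: str) -> str: ...


class StubVendorA:
    """Stand-in for one vendor; a real adapter would call that vendor's SDK here."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class StubVendorB:
    """Stand-in for a second vendor with the same interface."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


def summarize_ticket(ticket: str, provider: CompletionProvider) -> str:
    # Product logic builds the prompt; it never imports a vendor SDK directly.
    return provider.complete(f"Summarize this support ticket: {ticket}")


if __name__ == "__main__":
    # Swapping providers is a one-line change at the call site.
    for provider in (StubVendorA(), StubVendorB()):
        print(summarize_ticket("Login fails after password reset", provider))
```

If a team's codebase looks like this, a provider switch is mostly adapter work plus re-evaluating prompts. If vendor calls are scattered through the product logic, the 30-day deadline is much harder to meet.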

The most dangerous trap is buried in the "Inference Margin." We have long accepted as an axiom that software margins expand with scale, because the marginal cost of a new user nears zero. In the AI world, the opposite is true. Traditional SaaS operates on 80–90% gross margins, whereas AI-native projects hover around 50–60%, and this figure often declines as load increases. The culprit is inference: every agent request burns physical GPU resources and electricity. The returns are not compensating for it: a BCG study finds that 60% of companies see no material gain from AI investments, and data for 2025 show the share of companies shutting down AI initiatives has jumped from 17% to 42%. When 84% of enterprises report margin erosion of 6% or more due to infrastructure costs, scaling becomes little more than an efficient way to incinerate capital.
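The mechanics behind a margin that shrinks under load can be sketched with a flat per-seat price and a per-request inference cost. All figures below are illustrative assumptions, not data from the studies cited above, and `gross_margin` is a hypothetical helper.

```python
def gross_margin(
    price_per_seat: float,
    requests_per_seat: float,
    cost_per_request: float,
    other_cogs_per_seat: float = 0.0,
) -> float:
    """Gross margin for one seat under flat pricing and usage-based inference cost."""
    cogs = requests_per_seat * cost_per_request + other_cogs_per_seat
    return (price_per_seat - cogs) / price_per_seat


if __name__ == "__main__":
    # Assumed numbers: $30 per seat per month, $0.01 per request, $3 of hosting and support.
    for requests in (0, 300, 900, 1500):
        margin = gross_margin(30.0, requests, 0.01, other_cogs_per_seat=3.0)
        print(f"{requests:>5} requests/seat -> gross margin {margin:.0%}")
```

With zero inference the seat looks like classic SaaS at 90%. Under these assumptions, the same seat at 900 requests a month lands at 60%, and at 1,500 it falls to 40%. Revenue per seat is fixed while cost tracks usage, so the heaviest users are the least profitable ones.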

Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027. This is the logical end for those building businesses on the resale of someone else’s compute without creating a technological moat. To verify that a company has one, you must audit the stack: check for proprietary RAG pipelines, models fine-tuned on unique datasets, and architectural independence from any single provider. Without these, "AI transformation" remains an expensive bolt-on that eats EBITDA faster than it attracts customers. In an era where inference is the primary expense, the survivors won’t be those who write the best prompts, but those who control the cost of every function call.

Ask your CTO for a unit-economic report on a single successful request, accounting for GPU depreciation or API fees. If these costs exceed 20% of the transaction price, your scaling model is dead on arrival.
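A minimal sketch of what that report could compute is shown below. The 20% threshold comes from the text; everything else, including the `RequestCost` fields and the idea of dividing by a success rate so that failed or retried calls are charged to the successful ones, is an assumption for illustration.

```python
from dataclasses import dataclass

COST_SHARE_LIMIT = 0.20  # threshold from the text: inference cost / transaction price


@dataclass
class RequestCost:
    """Hypothetical inputs for one request type, all in USD."""

    price_per_request: float       # revenue attributed to one successful request
    api_fees: float = 0.0          # third-party model fees per attempt
    gpu_hours: float = 0.0         # own-GPU time per attempt
    gpu_hourly_cost: float = 0.0   # depreciation plus power per GPU-hour
    success_rate: float = 1.0      # share of attempts that end in a billable result

    def cost_per_successful_request(self) -> float:
        per_attempt = self.api_fees + self.gpu_hours * self.gpu_hourly_cost
        # Failed attempts still cost money, so spread them over the successes.
        return per_attempt / self.success_rate

    def cost_share(self) -> float:
        return self.cost_per_successful_request() / self.price_per_request

    def passes(self) -> bool:
        return self.cost_share() <= COST_SHARE_LIMIT


if __name__ == "__main__":
    api_only = RequestCost(price_per_request=0.50, api_fees=0.06, success_rate=0.8)
    self_hosted = RequestCost(
        price_per_request=0.50, gpu_hours=0.05, gpu_hourly_cost=3.0, success_rate=0.9
    )
    for name, rc in (("api_only", api_only), ("self_hosted", self_hosted)):
        print(f"{name}: cost share {rc.cost_share():.1%}, passes={rc.passes()}")
```

In this made-up example the API-backed request costs 15% of its price and clears the bar, while the self-hosted one comes out near 33% and does not. The useful part of the exercise is forcing a per-request number at all, not the specific figures.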