Researchers have released LPM 1.0, a model that animates a single photograph so that it appears to speak. The focus is on realistic lip synchronization, facial expressions, and fluid emotions rendered in real time. The model can sustain this for up to 45 minutes, a long duration for generative AI tools. LPM 1.0 can lip-sync to speech from AI voice models like ChatGPT and can adopt various appearances, ranging from photorealistic faces to anime and 3D characters.

The system distinguishes between three states: listening (nodding, reacting), speaking (lips moving in sync with audio), and pauses (naturalistic breaks). To keep the face consistent, it reuses details from the original photo at different angles, a process termed 'multi-granular identity conditioning,' instead of generating features from scratch.

LPM 1.0 is currently a laboratory tool with no immediate plans for public release, and its developers acknowledge that artifacts remain and that the quality is not yet at Hollywood standards. Even so, the ability to generate multi-minute videos on demand is a substantial leap beyond simple meme creation. Potential applications range from virtual assistants to personalized video calls. The technology promises to sharply reduce the cost and time required to create video content for marketing, training, and support. Imagine having your own avatar delivering custom messages in real time.

Impressive as it is, this advance also brings the persistent challenge of deepfakes and content verification to the forefront. Without robust verification methods, the risk that fabricated video is accepted at face value increases significantly.

© The Value Engineering 2026

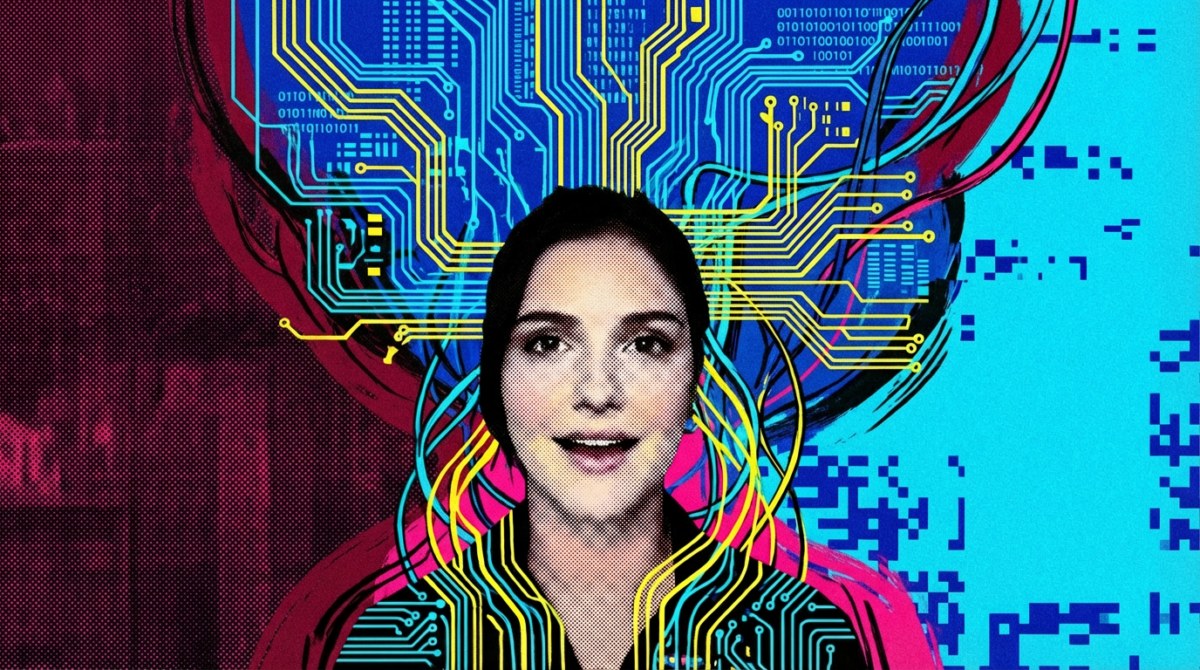

AI Lip Sync Model: New Business Threats & Opportunities

New AI model LPM 1.0 animates photos to speak realistically, posing deepfake risks but also promising reduced video content costs for business.
