The global data center industry has hit a physical wall at full throttle. As AI workloads scale to gigawatt levels, the primary challenge is no longer chip overheating, but the sheer inertia of power grids. According to a recent report by Ampace, the critical bottleneck for digital transformation has shifted from cooling systems to the dynamic stability of power supply chains.

Modern GPU clusters generate sharp, high-frequency, synchronized pulse loads. With power densities exceeding 100 kW per rack, these fluctuations create a technological paradox: AI workloads shift their power draw within milliseconds, while the physical infrastructure responds at a snail's pace.

According to Ampace, sudden consumption spikes trigger voltage transients and destabilize grid frequency. This effectively turns a data center into a ticking time bomb for the local power grid. Ironically, traditional backup solutions like diesel generators and gas turbines are useless here: they simply cannot react to millisecond power surges. Consequently, operators are forced to inflate budgets for redundant infrastructure solely to dampen this volatility.

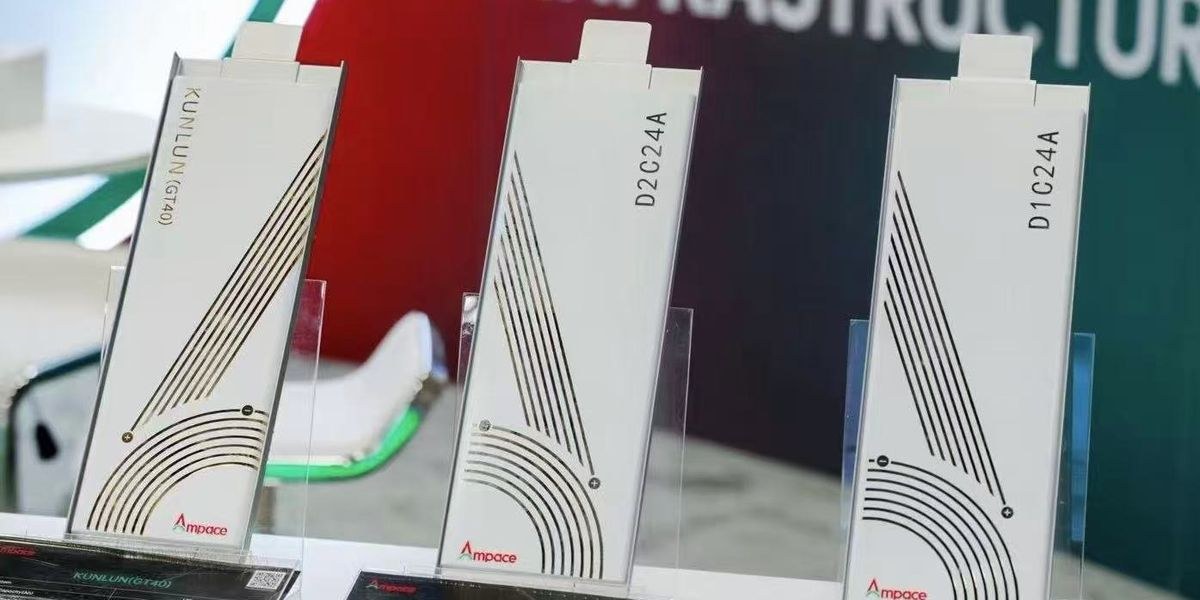

At the Data Center World 2024 conference, representatives from Ampace and Eaton stated clearly: energy storage systems must evolve from a passive 'insurance policy' into active, high-speed stabilizers. The proposed solution involves installing 'shock absorbers' at the rack level. Ampace explained that their semi-solid-state batteries, featuring low electrolyte content, are designed to neutralize pulses within the local circuit before they compromise the external grid.
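To make the 'shock absorber' idea concrete, here is a minimal Python sketch of a rack-level buffer. It is a toy model, not Ampace's or Eaton's actual control logic: the function name `smooth_with_buffer`, the ramp limit, and the load trace are all hypothetical, and battery capacity and conversion losses are ignored. The upstream grid draw is allowed to change only by a fixed amount per time step, and the battery covers whatever gap remains.

```python
def smooth_with_buffer(load_kw, max_ramp_kw_per_step):
    """Split a rack load trace into grid draw and battery power.

    The grid draw follows the load but may change by at most
    max_ramp_kw_per_step between samples. The battery covers the gap
    (positive = discharging, negative = charging).
    Assumption: unlimited battery capacity and no conversion losses.
    """
    grid, battery = [], []
    prev = load_kw[0]  # assume the grid starts matched to the load
    for p in load_kw:
        # Clamp the change in grid draw to the allowed ramp rate.
        delta = max(-max_ramp_kw_per_step, min(max_ramp_kw_per_step, p - prev))
        prev += delta
        grid.append(prev)
        battery.append(p - prev)
    return grid, battery


# Hypothetical trace: a rack idling at 30 kW jumps to 110 kW and back.
load = [30] * 3 + [110] * 10 + [30] * 10
grid, battery = smooth_with_buffer(load, max_ramp_kw_per_step=10)

# Largest sample-to-sample jump seen by the rack vs. by the grid.
max_load_step = max(abs(b - a) for a, b in zip(load, load[1:]))
max_grid_step = max(abs(b - a) for a, b in zip(grid, grid[1:]))
print(max_load_step, max_grid_step)
```

In this toy trace the rack sees an 80 kW jump between samples, while the grid side never changes by more than the 10 kW ramp limit. The battery discharges during the rising edge and recharges by the same amount during the falling edge, so the pulse is absorbed inside the local circuit.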

Combining this battery chemistry with Eaton’s control algorithms allows for the suppression of dangerous subsynchronous oscillations. We are witnessing a curious technical regression: to prevent next-generation software from breaking the physical world, engineers must invent sophisticated 'crutches' made of specialized chemistry and intelligent UPS systems. Without a radical upgrade of this fundamental layer, further scaling of AI models will become economically unfeasible due to the prohibitive costs of system stabilization.