The pharmaceutical industry has hit a technological dead end. Up to 80% of FDA submissions still rely on SAS, a platform as reliable as it is closed. The algorithms built on it, battle-tested over decades, have become isolated 'black boxes.' Jaime Yang of Harrisburg University notes that proprietary macro libraries, often spanning hundreds of thousands of lines, hard-code statistical logic directly into the formatting code. The result? Invaluable data is trapped inside RTF reports, where it is little more than unreadable 'noise' for modern AI agents. CTOs typically face a grim choice: embark on a high-risk, from-scratch rewrite of validated logic, or opt for superficial updates that fail to solve the underlying data access problem.

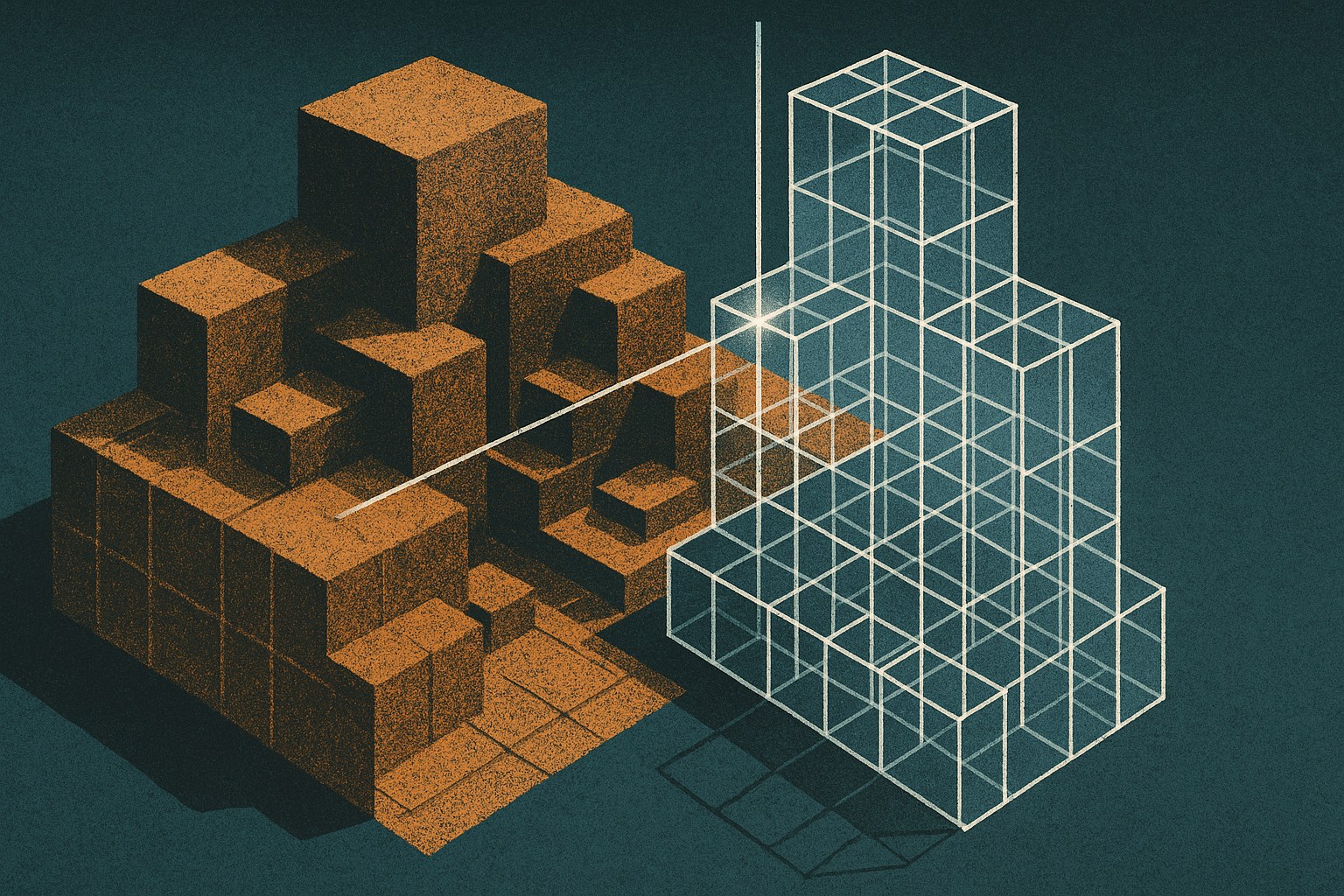

Instead of dismantling these working mechanisms, Yang proposes a non-destructive framework that builds a digital meta-layer over legacy systems. The architecture—comprising a typed intermediate representation (IR) and an orchestrator—wraps legacy components and converts their output into a structured, machine-readable stream. In essence, it acts as a high-tech translator that allows AI to 'understand' SAS without modifying the source code. Yang suggests this approach enables a peaceful coexistence of eras: legacy libraries continue to satisfy regulatory requirements, while neural networks take over deep analytics and pharmacovigilance automation.
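To make the wrapping idea concrete, here is a minimal Python sketch of a typed IR and an orchestrator. It is an illustration under stated assumptions, not Yang's implementation: the `Cell` schema, the `Orchestrator` class, the pipe-delimited text format, and the stand-in `fake_legacy_ae_table` component are all hypothetical. A real deployment would invoke the unmodified SAS component and parse its actual RTF output.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterator, Optional, Tuple


@dataclass(frozen=True)
class Cell:
    """One typed table cell in the intermediate representation (hypothetical schema)."""
    table_id: str
    row_label: str
    column: str
    value: float
    unit: Optional[str] = None


# A legacy component is treated as an opaque callable that returns report text.
LegacyComponent = Callable[[], str]
# A parser turns (table_id, report text) into a stream of typed cells.
Parser = Callable[[str, str], Iterator[Cell]]


def parse_pipe_table(table_id: str, text: str) -> Iterator[Cell]:
    """Parse a simple pipe-delimited table; a stand-in for a real RTF parser."""
    lines = [ln for ln in text.strip().splitlines() if ln.strip()]
    header = [h.strip() for h in lines[0].split("|")]
    for line in lines[1:]:
        fields = [f.strip() for f in line.split("|")]
        row_label = fields[0]
        for column, raw in zip(header[1:], fields[1:]):
            yield Cell(table_id, row_label, column, float(raw))


class Orchestrator:
    """Runs wrapped components unchanged and emits their output as typed cells."""

    def __init__(self) -> None:
        self._components: Dict[str, Tuple[LegacyComponent, Parser]] = {}

    def register(self, table_id: str, component: LegacyComponent, parser: Parser) -> None:
        self._components[table_id] = (component, parser)

    def stream(self) -> Iterator[Cell]:
        for table_id, (component, parser) in self._components.items():
            # The legacy code is only called, never modified.
            yield from parser(table_id, component())


def fake_legacy_ae_table() -> str:
    """Hypothetical stand-in for the text a legacy macro would produce."""
    return "Event | Placebo | Drug\nHeadache | 12 | 15\nNausea | 4 | 9\n"


orchestrator = Orchestrator()
orchestrator.register("ae_summary", fake_legacy_ae_table, parse_pipe_table)
cells = list(orchestrator.stream())
```

The point of the design is that downstream consumers, including AI agents, see only `Cell` records with explicit types and labels, while the component that produced the report stays exactly as validated.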

The methodology was tested against an industrial archive of 558 components and 373,000 lines of code. The results are striking: proprietary 'information noise' was reduced by 92%. In tests on Phase III clinical trial reporting, data parity exceeded 80%, and on the public CDISC benchmark the system achieved 100% accuracy, with not a single data cell compromised during transformation. In experiments with LLMs, Yang confirmed that data prepared this way allows automated detection of anomalous side effects and instant generation of configurations for new trials.
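The article does not say how data parity was measured. One plausible reading is a cell-by-cell comparison between the values in the original report and the values recovered after transformation. The sketch below assumes that definition; the `cell_parity` function, the tolerance, and the sample values are hypothetical and are not taken from Yang's study.

```python
from typing import Dict, Tuple

# A cell is addressed by (row label, column name); an assumed convention.
CellKey = Tuple[str, str]


def cell_parity(
    reference: Dict[CellKey, float],
    candidate: Dict[CellKey, float],
    tol: float = 1e-9,
) -> float:
    """Return the fraction of reference cells reproduced within `tol`."""
    if not reference:
        raise ValueError("reference table is empty")
    matched = sum(
        1
        for key, expected in reference.items()
        if key in candidate and abs(candidate[key] - expected) <= tol
    )
    return matched / len(reference)


# Illustrative values only: four reference cells, one of which drifts.
reference = {
    ("Headache", "Placebo"): 12.0,
    ("Headache", "Drug"): 15.0,
    ("Nausea", "Placebo"): 4.0,
    ("Nausea", "Drug"): 9.0,
}
candidate = dict(reference)
candidate[("Nausea", "Drug")] = 8.0

parity = cell_parity(reference, candidate)  # 3 of 4 cells match
```

Under this definition, 100% accuracy means every reference cell is reproduced, and a missing cell counts against parity in the same way as a wrong one.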

We view this as a rare, pragmatic approach to AI transformation, one where decades of accumulated expertise become an asset rather than a liability for R&D. The framework bypasses multi-year data migration cycles while maintaining compliance with FDA and EMA regulatory requirements. Although scaling across heterogeneous pharmacoinformatics platforms still requires manual tuning, it offers industry leaders a direct path to predictive analytics without halting operations. It is a real opportunity to accelerate time-to-market for new drugs simply by unlocking the data already sitting in legacy code.