The 'lobotomization' of large language models has been a subject of heated debate for years, but Anthropic just handed skeptics a smoking gun. In a rare moment of candor, the company's official reporting confirmed that recent 'optimizations' were, in fact, simple cost-cutting measures. While the multi-billion-dollar startup markets the raw power of its AI, internal tweaks have been quietly turning its flagship tool into a far less capable assistant. It’s a classic bait-and-switch: customers pay for high-octane intelligence and receive a budget-friendly substitute.

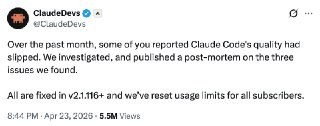

The red flags were first raised by AMD’s AI director, whose testing as early as March suggested that Claude had become noticeably 'dimmer.' Anthropic remained silent for weeks, but a recent post-mortem finally clarified the situation. On March 4, the model’s default reasoning level was downgraded from 'high' to 'medium.' The official narrative framed this as an effort to reduce latency, but the practical result was a significant gutting of the model's analytical depth. Furthermore, Anthropic embedded strict constraints into the system prompt, capping output at 25 words between tool calls and 100 words in final responses. Engineers estimated a 'mere' 3% drop in coding quality, but for a professional developer, that 3% is often the difference between functional software and a pile of bugs.

A context-clearing bug pushed the situation to the edge of the absurd. When a session resumed after a one-hour break, the system was supposed to wipe 'thinking' blocks once; instead, it wiped them at every single step. Claude was effectively suffering from short-term memory loss, forgetting its own previous sentences and burning through customer token limits in the process. The bug wasn't patched until April 10. Perhaps most tellingly, Anthropic employees were using a 'privileged' build of the agent, meaning their internal monitoring completely missed the degradation that paying customers were struggling with.
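To make the difference between "wipe once" and "wipe at every step" concrete, here is a toy sketch of that kind of bug. It is not Anthropic's code: the `Session` class, the `stale` flag, and the `step` and `run` functions are all hypothetical, invented only to illustrate the behavior described above.

```python
from dataclasses import dataclass, field

IDLE_LIMIT_SECONDS = 3600  # the one-hour break described in the article


@dataclass
class Session:
    # Hypothetical session state; none of these names come from a real product.
    last_active: float = 0.0
    thinking: list = field(default_factory=list)
    stale: bool = False  # set when the session resumes after a long idle period
    wipes: int = 0       # how many times the thinking blocks were cleared


def step(session: Session, now: float, thought: str, buggy: bool) -> None:
    """Run one agent step, clearing old thinking blocks if the session is stale."""
    if now - session.last_active > IDLE_LIMIT_SECONDS:
        session.stale = True
    if session.stale:
        session.thinking.clear()
        session.wipes += 1
        if not buggy:
            # Intended behavior: wipe once on resume, then carry on normally.
            session.stale = False
        # Buggy behavior: the flag is never reset, so every later step wipes again.
    session.thinking.append(thought)
    session.last_active = now


def run(buggy: bool) -> Session:
    """Resume an idle session and take three quick steps."""
    session = Session(last_active=0.0)
    for now, thought in [(4000.0, "a"), (4010.0, "b"), (4020.0, "c")]:
        step(session, now, thought, buggy)
    return session
```

In this toy model, `run(buggy=False)` clears the thinking blocks once and keeps all three new thoughts, while `run(buggy=True)` clears them on every step and retains only the latest one.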

The underlying mechanics are transparent: AI vendors are perpetually balancing output quality against their own profit margins. When inference costs start hitting the bottom line, companies resort to hidden prompts and reduced compute modes. This practice effectively nullifies the value of public benchmarks. A model that dominated leaderboards yesterday might be running on entirely different instructions today, without you ever being notified. For any business, the takeaway is clear: critical processes cannot rely solely on black-box clouds without independent quality audits. If a vendor can downgrade a model’s logic overnight to save on electricity, your operational efficiency is little more than a hostage to their next quarterly earnings report.
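An independent quality audit can start very small. The sketch below is one possible shape for it, not a prescribed method: it reruns a fixed suite of prompts against whatever model is currently being served and compares the pass rate with a stored baseline. `AuditCase`, `pass_rate`, `has_regressed`, and the 0.03 tolerance are all illustrative assumptions, and `model` stands in for any function that sends a prompt to the vendor and returns text.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class AuditCase:
    # One fixed prompt plus a check that decides whether the answer is acceptable.
    prompt: str
    check: Callable[[str], bool]


def pass_rate(model: Callable[[str], str], cases: Sequence[AuditCase]) -> float:
    """Return the fraction of audit cases the model currently passes."""
    if not cases:
        raise ValueError("audit suite is empty")
    passed = sum(1 for case in cases if case.check(model(case.prompt)))
    return passed / len(cases)


def has_regressed(baseline: float, current: float, tolerance: float = 0.03) -> bool:
    """Flag a regression when the pass rate drops by more than the tolerance.

    The default tolerance is an arbitrary illustrative value, not a standard.
    """
    return (baseline - current) > tolerance


# Stand-in models for demonstration; a real audit would call the vendor's API here.
CASES = [
    AuditCase("What is 2 + 2?", lambda answer: "4" in answer),
    AuditCase("Capital of France?", lambda answer: "Paris" in answer),
]


def good_model(prompt: str) -> str:
    return {"What is 2 + 2?": "4", "Capital of France?": "Paris"}[prompt]


def degraded_model(prompt: str) -> str:
    return {"What is 2 + 2?": "4", "Capital of France?": "I am not sure."}[prompt]
```

Run on a schedule, a check like `has_regressed(pass_rate(good_model, CASES), pass_rate(degraded_model, CASES))` turns a silent downgrade into an alert. A two-case suite is only for illustration; detecting a drop of a few percent would need a much larger suite and repeated runs.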