Modern AI can effortlessly write complex Python code, yet it still struggles with elementary physical tasks such as folding a T-shirt or assembling a fragile circuit board. The bottleneck isn't a lack of compute or terabytes of video; it is a chronic "sensory hunger" in embodied AI. For too long, the industry has ignored the physics of touch. While most players remain fixated on Vision-Language-Action (VLA) models, Hong Kong-based DAIMON Robotics is betting on a richer form of multimodality: Vision-Tactile-Language-Action (VTLA). The release of the Daimon-Infinity dataset isn't just another academic attempt to teach a robot to serve coffee; it is a strategic move to establish a gold standard for high-precision assembly and service tasks.

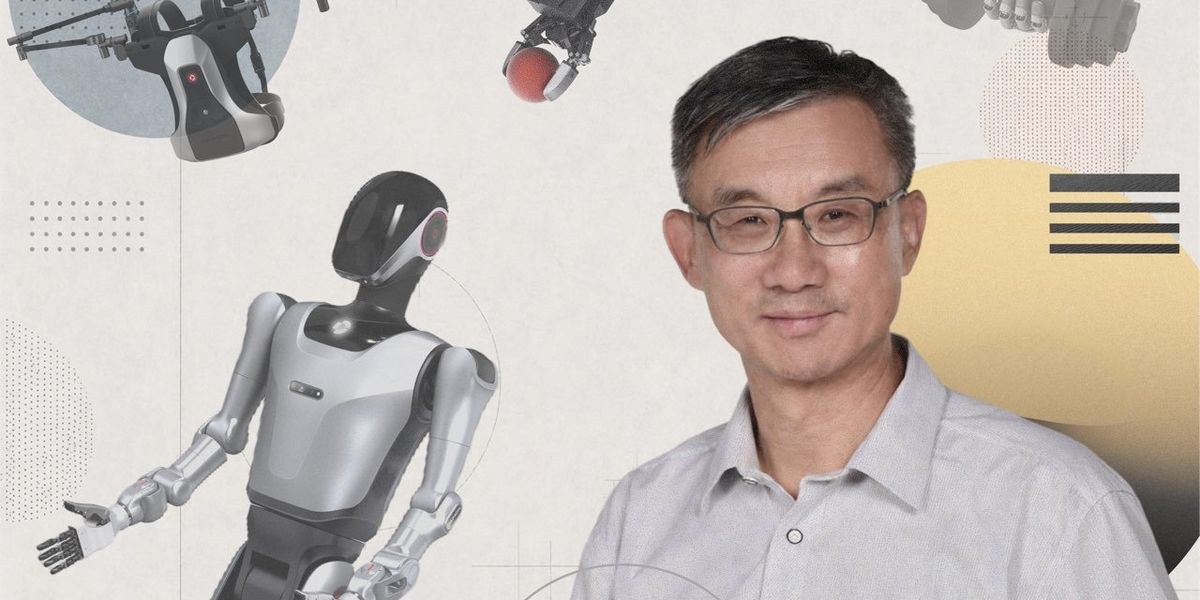

Behind this ambitious project is Professor Michael Yu Wang, co-founder of DAIMON Robotics and a Carnegie Mellon alumnus. He argues that a robot relying solely on cameras remains "numb" to the nuances of the physical world. To bridge this gap, DAIMON developed a tactile module that packs 110,000 sensing units into a device the size of a human fingertip. The hardware captures deformation, slippage, friction, and surface texture: signals that are essentially invisible to standard computer vision. We are witnessing a transition from crude motor skills toward surgical dexterity, as robots finally have a chance to learn to handle a fragile glass with the same calibrated pressure as a human.

The economics of this dataset deserve scrutiny. DAIMON has open-sourced 10,000 hours of data across 80 different scenarios. The involvement of Google DeepMind and leading universities in Singapore and the U.S. sends a clear signal to the market: raw video is no longer enough to build universal foundation models for robotics. For CTOs and investors, this means the automation threshold for unstructured environments, from flexible manufacturing lines to hospitality, is dropping rapidly. In China, these systems are already being deployed in hotels and shops, evidence that tactile data is becoming the primary fuel for robots that interact with the human world.

Investing in vision-only robotics today increasingly looks like a dead-end strategy. The Daimon-Infinity release shows that competitive advantage has shifted from what a machine sees to what it feels. If your automation roadmap ignores high-resolution tactile feedback, you are effectively hiring an employee with numb hands and asking them to perform surgery.