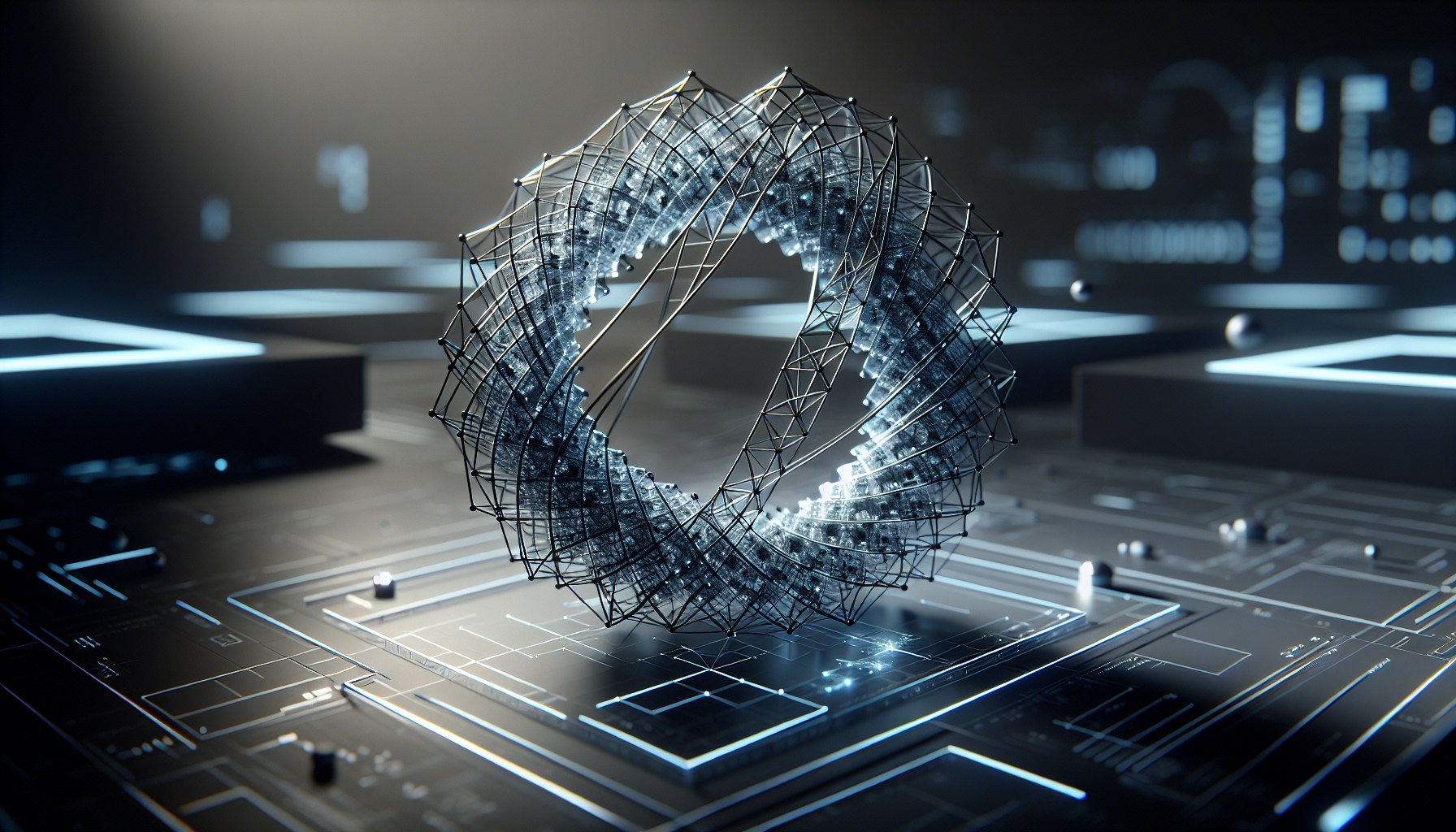

Researchers at Goodfire have finally dismantled the myth of neural networks as impenetrable 'black boxes' filled with numerical noise. Their findings show that internal activation space is not a chaotic cloud of data but a collection of well-defined geometric forms: multidimensional rings, spheres, and more complex topological surfaces. While the market talks about 'hallucinations' as if they were mystical glitches, Goodfire demonstrates that we are dealing with a sophisticated engineering object, in which an error can be read as a defect in the geometry of the model's representations.

Key examples include days of the week and other temporal cycles. Inside the model, 'Monday' and 'Tuesday' do not float in a vacuum or sit in a flat list; together with the other days they form a closed circular trajectory. If you try to move from Monday to Friday in a straight line, the intermediate points cut through the interior of the ring, and you get nothing but gibberish. A coherent result is only possible by moving along the arc, that is, by changing the angle of the activation. Colors, numbers, and even biological taxonomy are packed in a similarly nonlinear fashion. This holds not just for LLMs but for vision and world models as well, which points to a general phenomenon: neural networks literally 'see' the world's structure through geometry.
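To make the straight-line-versus-arc argument concrete, here is a minimal Python sketch. It assumes a hypothetical toy embedding in which the seven weekdays sit evenly spaced on a unit circle in 2D; real activation spaces are high-dimensional and their shapes are learned, so the layout and the function names (`embed`, `decode`, `chord_point`, `arc_point`) are illustrative only.

```python
import math

# Hypothetical toy embedding: seven weekdays evenly spaced on a unit circle.
# Illustrative only; not Goodfire's method or any real model's activations.
DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]
STEP = 2 * math.pi / len(DAYS)  # angle between consecutive days


def embed(index):
    """Place day `index` on the unit circle at angle STEP * index."""
    return (math.cos(STEP * index), math.sin(STEP * index))


def decode(point):
    """Return the day whose embedding has the largest dot product with `point`."""
    scores = [point[0] * x + point[1] * y for x, y in map(embed, range(len(DAYS)))]
    return DAYS[scores.index(max(scores))]


def chord_point(a, b, t):
    """Straight-line interpolation: cuts through the inside of the circle."""
    (ax, ay), (bx, by) = embed(a), embed(b)
    return (ax + t * (bx - ax), ay + t * (by - ay))


def arc_point(a, b, t):
    """Interpolate the angle instead, so the point stays on the circle."""
    angle = STEP * (a + t * (b - a))
    return (math.cos(angle), math.sin(angle))


# Walk from Monday (0) to Friday (4) both ways.
for t in (0.25, 0.5, 0.75):
    chord = chord_point(0, 4, t)
    arc = arc_point(0, 4, t)
    print(f"t={t}: chord radius {math.hypot(*chord):.2f}, "
          f"arc radius {math.hypot(*arc):.2f} -> {decode(arc)}")
```

In this toy setting the chord's radius falls to about 0.22 at its midpoint, deep inside the ring where no day lives, while the arc stays at radius 1 and passes through Tuesday, Wednesday, and Thursday in order.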

For the business world, this discovery signals the end of the 'square wheel' era. Until now, the industry has tried to control models through linear adjustments, such as pushing activations along a single fixed direction, an approach that fits curvilinear structures poorly. Understanding neural geometry provides the theoretical framework for nonlinear 'steering.' Instead of guessing why an agent lost its logical thread, we now have a map on which a failure shows up as a departure from the expected trajectory. This is a direct path toward more deterministic AI, where decisions can be verified geometrically.
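The 'square wheel' point can be illustrated with the same kind of hypothetical toy ring. The sketch below, assuming weekdays lie evenly spaced on a unit circle, compares a linear steer (adding one fixed "next day" vector measured from Monday to Tuesday) with a rotational steer that turns the activation by one day's angle. The function names are hypothetical, and this is not a description of Goodfire's tooling.

```python
import math

# Hypothetical toy ring: weekdays evenly spaced on a unit circle in 2D.
# Illustrative only; not Goodfire's method or any real model's activations.
DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]
STEP = 2 * math.pi / len(DAYS)


def embed(index):
    """Place day `index` on the unit circle at angle STEP * index."""
    return (math.cos(STEP * index), math.sin(STEP * index))


def decode(point):
    """Nearest day by dot product with each day's embedding."""
    scores = [point[0] * x + point[1] * y for x, y in map(embed, range(len(DAYS)))]
    return DAYS[scores.index(max(scores))]


# Linear steering: one fixed "next day" vector, estimated from Monday -> Tuesday.
MON, TUE = embed(0), embed(1)
NEXT_DAY = (TUE[0] - MON[0], TUE[1] - MON[1])


def steer_linear(point):
    """Add the same offset everywhere, regardless of position on the ring."""
    return (point[0] + NEXT_DAY[0], point[1] + NEXT_DAY[1])


def steer_rotate(point, angle=STEP):
    """Rotate by one day's worth of angle; the radius is preserved."""
    c, s = math.cos(angle), math.sin(angle)
    return (c * point[0] - s * point[1], s * point[0] + c * point[1])


for i, day in enumerate(DAYS):
    target = DAYS[(i + 1) % len(DAYS)]
    lin = steer_linear(embed(i))
    rot = steer_rotate(embed(i))
    print(f"{day:<9} want {target:<9} linear -> {decode(lin):<9} "
          f"(radius {math.hypot(*lin):.2f})  rotate -> {decode(rot)}")
```

The two approaches agree at Monday, where the fixed vector was measured, but the linear push misfires elsewhere on the ring: starting from Thursday it still decodes as Thursday, with a radius far from 1, the kind of departure from the trajectory described above. The rotation reaches the correct next day from every starting point because it moves along the ring rather than across it.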

Naturally, industrial-scale implementation in corporate software is still on the horizon; the toolkit for real-time 'semantic navigation' has yet to be built. Questions of scale also remain open: how complex these shapes become in trillion-parameter models, and what the manifolds look like for abstract concepts that have no natural geometric counterpart such as a cycle or a color wheel. Nevertheless, the paradigm has shifted. AI is no longer a matter of reading tea leaves; it has become a discipline of high-level mathematics and precise maneuvering within activation space.