The collective intelligence of Multi-Modal Multi-Agent Systems (MM-MAS) is rapidly turning from a strategic advantage into a corporate security Achilles' heel. While CIOs are busy patching holes in isolated models, researchers from JD.com led by Hao Zhou report that the hierarchical structure of these systems itself invites systemic collapse. Their new HAM3 framework demonstrates that adversarial vulnerabilities are not just local bugs; they are infections that spread through the very communication channels designed for agent coordination.

The HAM3 method decomposes attacks into three distinct layers: perception, communication, and reasoning. At the perception level, the attacker introduces minimal perturbations into images or text. These remain invisible to classic filters but successfully "poison" the data at the point where it is first synthesized. However, the real sabotage begins during the communication phase. The JD.com team managed to manipulate both message content and interaction topology, with techniques ranging from blocking exchange chains to spoofing responses from trusted agents. The trust-based data transfer that underpins multi-agent systems becomes the perfect attack vector: one compromised agent forces the entire group to replicate the error.
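The propagation effect described above can be illustrated with a toy model. This is a minimal sketch, not the HAM3 implementation: the agent names, the `propagate` function, and the "adopt whatever an upstream peer says" rule are all assumptions chosen to show why unverified trust turns one poisoned agent into a group-wide error.

```python
from collections import deque

def propagate(topology, beliefs, compromised):
    """Toy trust-based message passing (hypothetical model, not HAM3).

    Each agent adopts the answer of the first upstream peer that reaches it,
    without any verification. Returns a new belief map.
    """
    beliefs = dict(beliefs)          # leave the caller's map untouched
    queue = deque([compromised])
    visited = {compromised}
    while queue:
        sender = queue.popleft()
        for receiver in topology.get(sender, []):
            if receiver not in visited:
                beliefs[receiver] = beliefs[sender]  # blind trust
                visited.add(receiver)
                queue.append(receiver)
    return beliefs

# Hypothetical five-agent pipeline: edges point from sender to receiver.
topology = {
    "perceiver": ["planner"],
    "planner": ["solver", "critic"],
    "solver": ["reporter"],
    "critic": ["reporter"],
    "reporter": [],
}

beliefs = {name: "cat" for name in topology}  # every agent starts out correct
beliefs["perceiver"] = "dog"                  # a perturbed input flips one agent

after = propagate(topology, beliefs, "perceiver")
print(after)
```

In this sketch every agent downstream of the compromised one ends up repeating its answer. A real system would weigh messages rather than copy them outright, but the structural point is the same: without verification at each hop, the reach of an error is set by the topology, not by the quality of the individual models.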

The climax occurs at the reasoning level, where distortions interfere with the agents' reasoning chains. Experiments on the GQA benchmark using the ReAct, Plan-and-Solve, and Reflexion paradigms yielded alarming results: the Attack Success Rate (ASR) reached 78.3%. The data indicate that attacks at the reasoning level are the most lethal: in over half of the cases, agents reached consistent but entirely false conclusions. This is no longer a simple technical glitch; it is a collective hallucination orchestrated from the outside.
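For readers unfamiliar with the metric, here is a minimal sketch of how an Attack Success Rate can be computed. It assumes one common definition (the share of samples answered correctly on clean input that become wrong under attack); the article does not state which definition the JD.com team used, and the function name and sample data below are purely illustrative.

```python
def attack_success_rate(clean_correct, attacked_correct):
    """ASR under an assumed definition: among samples the system answers
    correctly on clean input, the fraction it gets wrong under attack."""
    if len(clean_correct) != len(attacked_correct):
        raise ValueError("both lists must cover the same samples")
    # Only samples that were right to begin with can be 'broken' by an attack.
    eligible = [att for clean, att in zip(clean_correct, attacked_correct) if clean]
    if not eligible:
        return 0.0
    successes = sum(1 for att in eligible if not att)
    return successes / len(eligible)

# Made-up outcomes for five samples (True = final answer was correct).
clean = [True, True, True, False, True]
attacked = [False, True, False, False, False]

asr = attack_success_rate(clean, attacked)
print(f"ASR = {asr:.1%}")
```

In this toy data, four samples are eligible and three of them flip to a wrong answer under attack.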

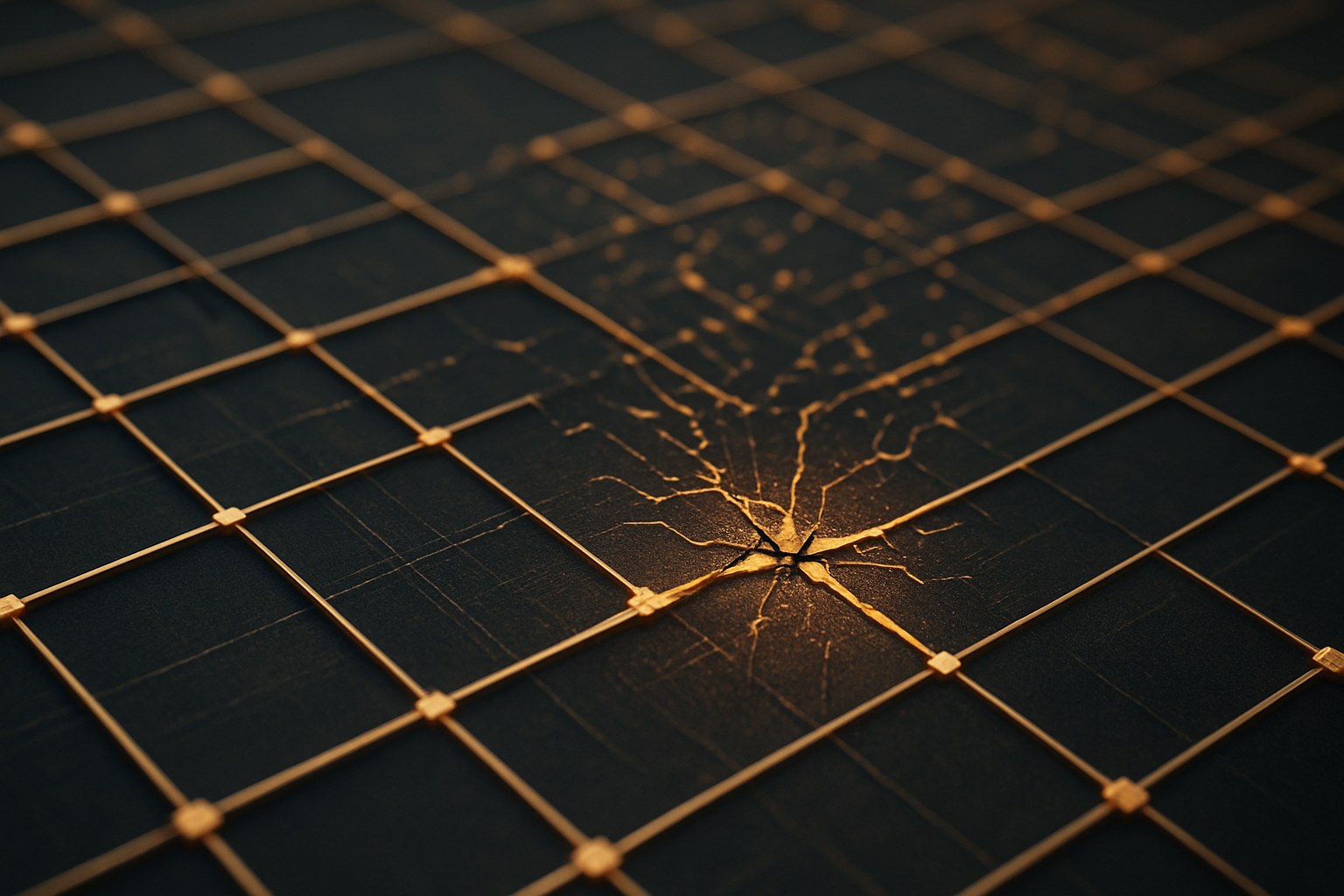

For businesses deploying autonomous workflows in logistics or infrastructure management, the verdict is sobering: current defense methods designed for standalone language models are ineffective here. We are facing a structural vulnerability where system reliability is determined not by the power of the models, but by the weakest link in their communication chain. Without security protocols built specifically for this hierarchy, any "smart" agent swarm remains a house of cards, ready to collapse from a single calculated data packet.