A recent Twitter dispute over Hugging Face's Open LLM Leaderboard resembled a petty squabble more than an analytical discussion. Falcon's unexpected rise to the top, together with MMLU scores for LLaMA that came in markedly lower than the figures reported in the LLaMA paper, triggered understandable consternation. The creators of the leaderboard eventually offered an explanation, revealing that behind the seemingly straightforward scores lay a complex set of issues that call into question the leaderboard's value for those making real business decisions. The problem, they noted, is not with the models themselves, but with how they are being measured.

It has become clear that no single, universally accepted method for evaluating MMLU exists. Hugging Face's leaderboard relies on EleutherAI's LM Evaluation Harness library for its rankings. The LLaMA team, however, was found to have used a modified version of the code developed by the original benchmark authors at UC Berkeley. Stanford's HELM initiative applies yet another, distinct methodology. When different tools and configurations are applied to the same evaluation task, divergent results are inevitable. Business leaders who focus solely on the top ranks of leaderboards without understanding the underlying evaluation 'ingredients' risk selecting a model that may not perform as effectively in real-world applications as its score suggests.

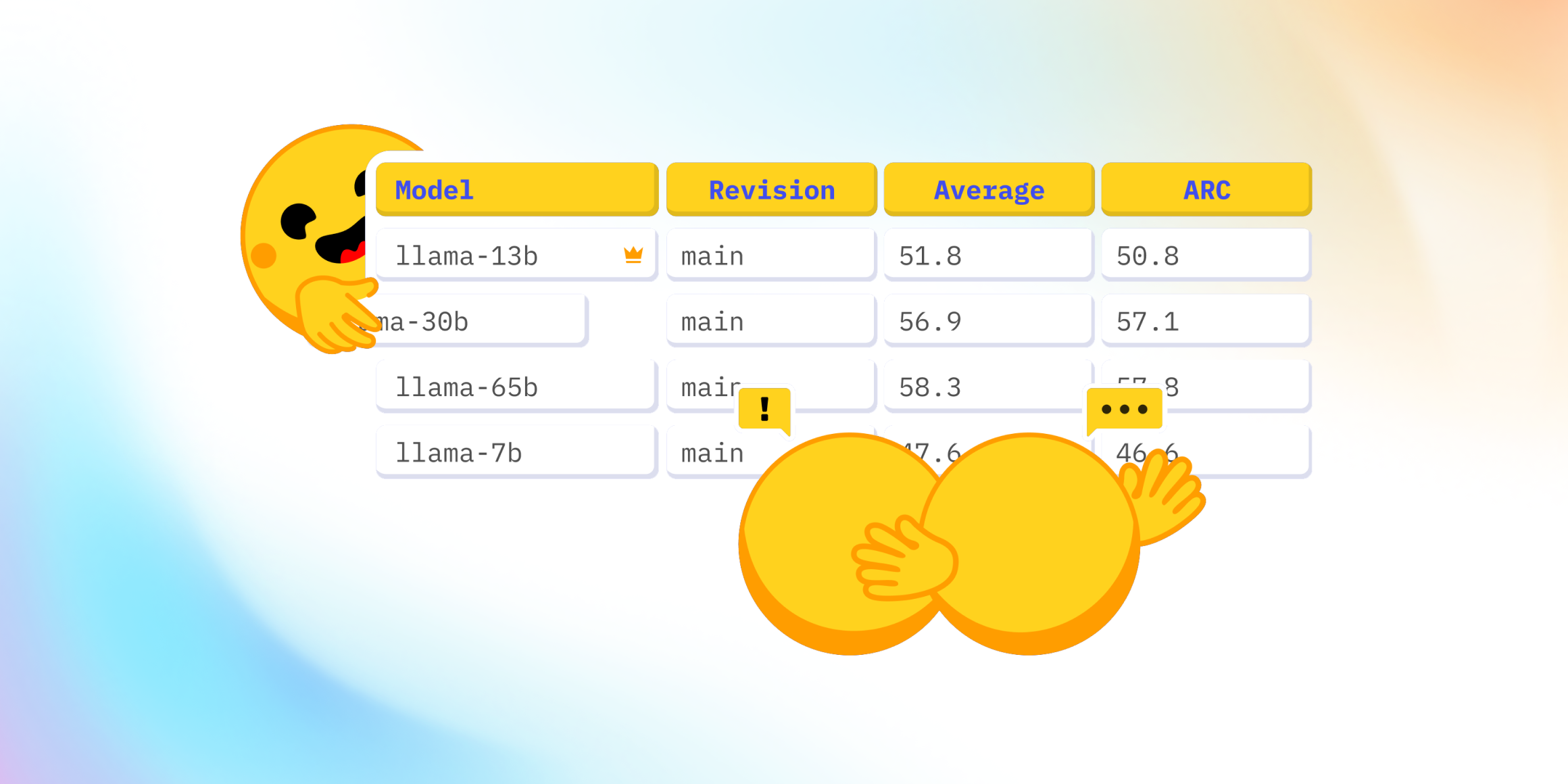
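
To make the divergence concrete, here is a toy sketch of how one model's output on one multiple-choice question can be graded as right or wrong depending on the scoring rule. Everything in it is an assumption made for illustration: the log-probabilities are invented, and the three rules (`letters_only`, `unrestricted_first_token`, `full_answer`) are simplified stand-ins for the kinds of choices an evaluation tool can make, not the actual code of any of the libraries named above.

```python
# Toy illustration: one question, one model, three scoring rules.
# All numbers below are invented; they do not come from any real model.

GOLD = "B"  # the correct choice for this hypothetical question

# Log-probability the model assigns to each answer letter as the next token.
LETTER_LOGPROBS = {"A": -2.1, "B": -1.9, "C": -2.5, "D": -3.0}

# Same distribution, but also showing a non-letter token the model prefers.
FIRST_TOKEN_LOGPROBS = {**LETTER_LOGPROBS, "The": -0.9}

# Summed log-probability of the full answer text behind each letter.
FULL_ANSWER_LOGPROBS = {"A": -6.0, "B": -9.5, "C": -7.2, "D": -8.8}


def argmax(scores: dict[str, float]) -> str:
    """Return the key with the highest score."""
    return max(scores, key=scores.get)


def letters_only() -> str:
    # Rule 1: compare only the four answer letters with each other.
    return argmax(LETTER_LOGPROBS)


def unrestricted_first_token() -> str:
    # Rule 2: take whatever token the model ranks first, letter or not.
    return argmax(FIRST_TOKEN_LOGPROBS)


def full_answer() -> str:
    # Rule 3: compare the likelihood of each complete answer string.
    return argmax(FULL_ANSWER_LOGPROBS)


rules = {
    "letters_only": letters_only,
    "unrestricted_first_token": unrestricted_first_token,
    "full_answer": full_answer,
}

# Map each rule to whether it marks the model's answer as correct.
results = {name: rule() == GOLD for name, rule in rules.items()}

for name, is_correct in results.items():
    print(f"{name}: predicted={rules[name]()!r} correct={is_correct}")
```

With these made-up numbers, only the first rule credits the model with a correct answer. Averaged over thousands of questions, differences of this kind are enough to reshuffle a ranking without any change to the models themselves.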

These kinds of discrepancies, exemplified by the LLaMA situation, erode trust in any ranking system. For businesses considering Large Language Models (LLMs) as tools to solve specific problems, the marketing spin is less important than a clear understanding of precisely how a model's performance is being measured. If differences in MMLU scores can arise from a simple change in configuration, a different code version, or, as in LLaMA's case, a 'custom implementation,' then business leaders are left wondering which numbers to rely on. Making LLM adoption decisions based on such data is akin to playing Russian roulette with a company's budget.

Why this matters: It is time to stop blindly accepting the impressive figures presented in leaderboards. True competitive advantage lies in understanding the methodology behind the results. Ask vendors specific questions: Which MMLU sub-benchmarks were used? Which evaluation code, version, and configuration produced the score? How were errors handled? Do they have proprietary internal benchmarks that reflect your specific operational needs? It is also crucial to verify that reported results can be reproduced. Implement an LLM only after testing it in your own controlled environment, rather than relying solely on external benchmarks. Failure to do so risks acquiring a 'champion' model that proves to be little more than an expensive calculator.
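
As a starting point for that kind of in-house testing, the sketch below scores a model against your own test cases and stores a fingerprint of the exact configuration and cases alongside the result, so a number can always be traced back to how it was produced. It is a minimal illustration under stated assumptions: `run_eval`, `stub_model`, the sample prompts, and the config keys are all hypothetical, and exact-match scoring is only one of many possible grading rules.

```python
import hashlib
import json
from typing import Callable

# Hypothetical test cases drawn from your own domain: (prompt, expected answer).
CASES = [
    ("Refund window for standard orders?", "30 days"),
    ("Is express shipping available?", "Yes"),
]

# Hypothetical evaluation settings; record whatever can change the score.
CONFIG = {"prompt_template": "v1", "temperature": 0.0, "scoring": "exact_match"}


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call; replace with your own client code."""
    canned = {
        "Refund window for standard orders?": "30 days",
        "Is express shipping available?": "no",
    }
    return canned.get(prompt, "")


def run_eval(model: Callable[[str], str], cases: list, config: dict) -> dict:
    """Score a model with case-insensitive exact match and fingerprint the setup."""
    correct = sum(
        model(prompt).strip().lower() == expected.strip().lower()
        for prompt, expected in cases
    )
    # Hash config and cases together: any change to either changes the fingerprint.
    payload = json.dumps({"config": config, "cases": cases}, sort_keys=True)
    fingerprint = hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]
    return {
        "accuracy": correct / len(cases),
        "n": len(cases),
        "fingerprint": fingerprint,
        "config": config,
    }


report = run_eval(stub_model, CASES, CONFIG)
print(json.dumps(report, indent=2))
```

Two reports are only comparable when their fingerprints match. If a vendor cannot supply the equivalent information for a published score, treat that score as unverified.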