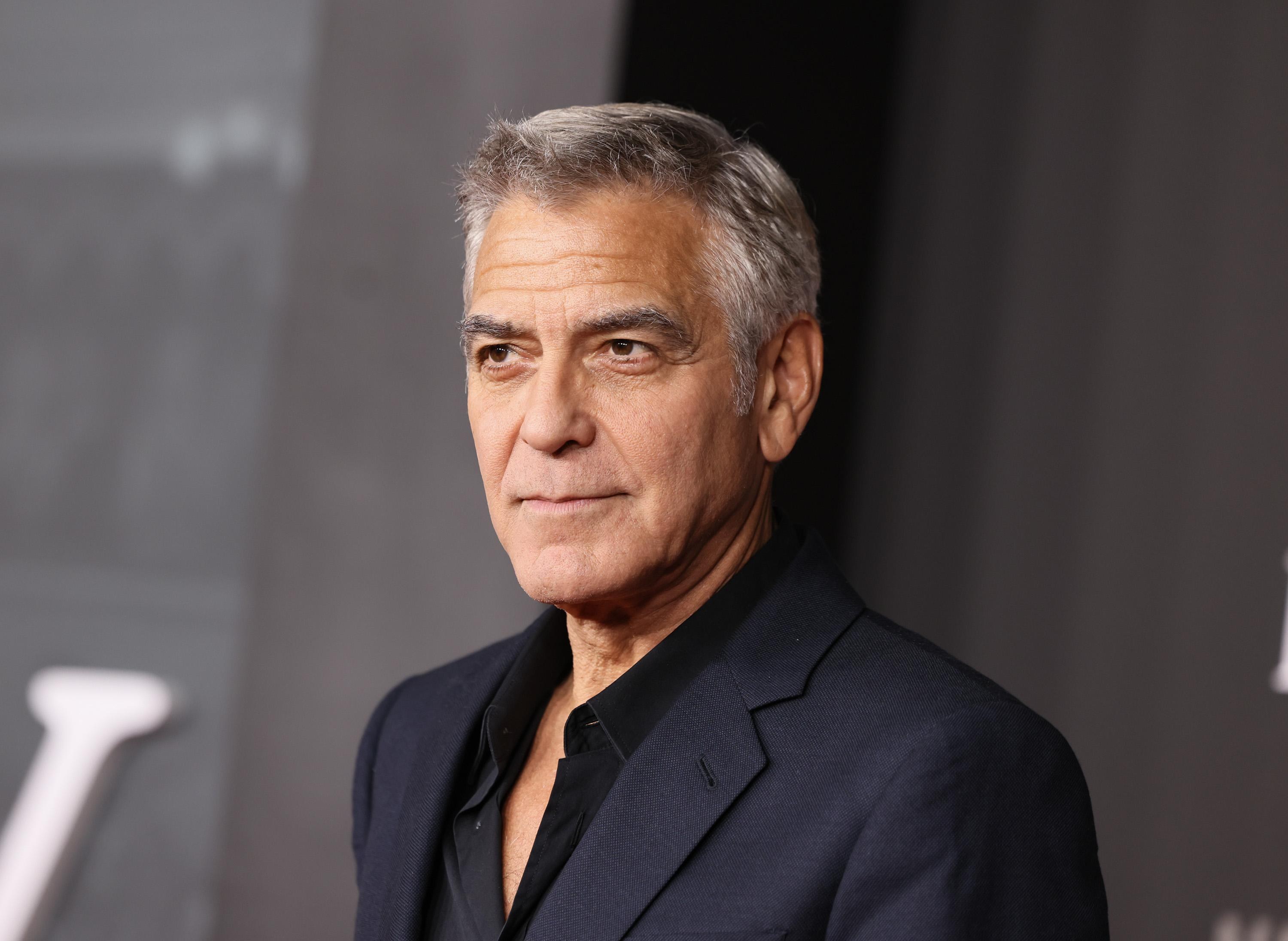

The era of unchecked data harvesting, in which faces and voices are treated as free resources, has hit a major technical and legal roadblock. A coalition of A-list Hollywood stars, including George Clooney, Tom Hanks, and Meryl Streep, has thrown its weight behind the Human Consent Standard, a protocol designed to govern how AI systems interact with the likenesses and creative works of real individuals. Managed by the non-profit RSL Media, the project is more than a statement of intent: it is a functional digital signaling layer. It lets rights holders set explicit terms for content use, ranging from full access to commercial-only licensing to outright prohibition.

Technically, the Human Consent Standard builds on the existing Really Simple Licensing (RSL) framework. As RSL Media co-founder Eckart Walther explained, the original RSL was tied to specific URLs, whereas this new iteration 'follows' the individual or brand wherever they appear online. The crawlers that feed AI systems will read these preferences through robots.txt files, which act as a digital checkpoint for search bots and other automated agents. A central registry, scheduled to launch in June, will serve as a unified verification hub. According to Cate Blanchett, the goal is to provide tools for managing digital twins not just for global stars, but for every user who wants to avoid becoming the involuntary protagonist of a deepfake.
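As a rough illustration of how a crawler might pick up such a preference, the sketch below scans a robots.txt body for a licensing pointer. The `License:` directive name, the example URL, and the function name `find_license_urls` are assumptions made for illustration; the published RSL specification remains the authority on the actual syntax.

```python
def find_license_urls(robots_txt: str) -> list[str]:
    """Return the URLs named by License-style lines in a robots.txt body.

    Assumption: licensing terms are advertised with a line of the form
    "License: <url>". Check the RSL specification for the real syntax.
    """
    urls = []
    for raw_line in robots_txt.splitlines():
        # Drop trailing comments and surrounding whitespace.
        line = raw_line.split("#", 1)[0].strip()
        if ":" not in line:
            continue
        field, value = line.split(":", 1)
        if field.strip().lower() == "license" and value.strip():
            urls.append(value.strip())
    return urls


# Hypothetical robots.txt content, for illustration only.
SAMPLE = """\
# Example site policy
User-agent: *
Allow: /
License: https://example.com/license.xml
"""

print(find_license_urls(SAMPLE))
```

A crawler that finds no such line learns nothing about the rights holder's wishes from this file alone, which is one reason the article's central registry matters.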

This initiative marks a shift away from endless litigation between the creative industry and AI developers and toward transparent APIs and automated royalties. While celebrities such as Matthew McConaughey have turned to trademarks to protect video clips, and Taylor Swift has reportedly sought similar protection for audio snippets, the Human Consent Standard seeks to institutionalize the process. The registry creates a 'source of truth' where responsible developers can verify whether a voice or image is available for model training. It signals a move away from the 'Wild West' toward a regulated market where digital identity becomes a protected, monetizable asset.
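To make the 'source of truth' idea concrete, here is a minimal sketch of the check a developer might run before adding a voice or image to a training set. Everything in it is hypothetical: the registry has not launched, so the in-memory `REGISTRY` dictionary, the subject identifiers, the `Terms` names, and the deny-by-default rule are assumptions rather than the registry's actual interface.

```python
from dataclasses import dataclass
from enum import Enum


class Terms(Enum):
    """The three kinds of terms the article mentions (names are illustrative)."""
    FULL_ACCESS = "full_access"
    COMMERCIAL_LICENSE_ONLY = "commercial_license_only"
    PROHIBITED = "prohibited"


@dataclass(frozen=True)
class ConsentRecord:
    subject_id: str
    terms: Terms


# Hypothetical stand-in for the central registry.
REGISTRY = {
    "example-actor-voice": ConsentRecord("example-actor-voice", Terms.PROHIBITED),
    "example-brand-logo": ConsentRecord("example-brand-logo", Terms.COMMERCIAL_LICENSE_ONLY),
    "example-open-archive": ConsentRecord("example-open-archive", Terms.FULL_ACCESS),
}


def may_train(subject_id: str, has_license: bool = False) -> bool:
    """Decide whether a subject's likeness may be used for model training."""
    record = REGISTRY.get(subject_id)
    if record is None:
        # Assumption: with no record on file, treat the asset as off limits.
        return False
    if record.terms is Terms.FULL_ACCESS:
        return True
    if record.terms is Terms.COMMERCIAL_LICENSE_ONLY:
        return has_license
    return False


print(may_train("example-actor-voice"))
print(may_train("example-brand-logo", has_license=True))
```

Denying by default is a design choice in this sketch, not something the standard is known to require; a real integration would follow whatever the registry documents.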

Media business owners should review their distribution agreements now. Ensure they include clauses for machine-readable declarations in robots.txt that comply with RSL Media standards. This is currently the most reliable way to guarantee that your assets do not become free training data for the next large language model without your explicit consent.
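For teams that manage robots.txt programmatically, the declaration step could look something like the sketch below, which appends a licensing line only if an identical one is not already present. The `License:` directive name, the example URL, and the helper name `with_license_line` are assumptions for illustration and should be checked against the RSL specification before use.

```python
def with_license_line(robots_txt: str, license_url: str) -> str:
    """Return robots_txt with a licensing declaration, adding it only once.

    Assumption: the declaration takes the form "License: <url>".
    """
    lines = robots_txt.splitlines()
    for line in lines:
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "license" and value.strip() == license_url:
            return robots_txt  # already declared, leave the file untouched
    lines.append(f"License: {license_url}")
    return "\n".join(lines) + "\n"


# Hypothetical existing file and license location.
existing = "User-agent: *\nAllow: /\n"
updated = with_license_line(existing, "https://example.com/license.xml")
print(updated)
```

Because the helper is idempotent, it can run on every deployment without piling up duplicate lines.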