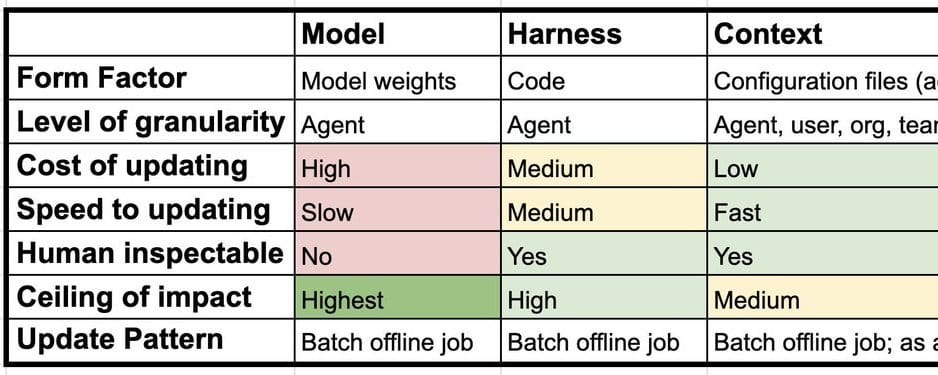

In an AI industry where every release is hailed as a breakthrough, LangChain proposes a different way to think about how agents learn. Continual learning in AI has traditionally meant endlessly retraining model weights, a direct path to budget overruns and disappointment. LangChain's developers argue that weights are merely the tip of the iceberg. Building agents that genuinely improve over time requires attention at three levels: the model itself, its 'harness' (a metaphor for the control code and instructions that drive it), and its 'context' (external knowledge, memory, and skills).

The first level, the model and its weights, is where the cost concentrates: every update demands expensive retraining and risks 'catastrophic forgetting,' in which new training degrades capabilities the model had already learned. The second level, the 'harness,' comprises the agent's control code, its instructions, and its tools. Optimizing this layer is already gaining momentum, with third-party tools that analyze agent logs and adjust the code. The third level, 'context,' covers everything that lives outside the harness: external knowledge, memory, and skills. Tuning all three layers, rather than weights alone, promises more stable and cost-effective AI development. It is akin to improving a patient's diet and lifestyle instead of performing endless organ transplants.
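To make the three layers concrete, here is a minimal Python sketch. It assumes a hypothetical agent design and is not LangChain's API: every class and method name (`Harness`, `Context`, `Agent`, `update_harness`, `remember`, `add_skill`) is illustrative. The model is treated as an opaque, frozen function, and 'learning' happens either by revising the harness instructions or by adding lessons and skills to context; neither path touches weights.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical sketch: none of these names come from LangChain's API.

# Layer 1: the model. Represented as an opaque callable (prompt -> text);
# nothing in this sketch modifies it, so no weights are ever retrained.
Model = Callable[[str], str]


@dataclass
class Harness:
    """Layer 2: the agent's control code, instructions, and tools."""
    instructions: str
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)


@dataclass
class Context:
    """Layer 3: external knowledge, memory, and skills outside the harness."""
    memory: List[str] = field(default_factory=list)
    skills: Dict[str, str] = field(default_factory=dict)


@dataclass
class Agent:
    model: Model
    harness: Harness
    context: Context

    def build_prompt(self, task: str) -> str:
        # The harness decides how instructions and context are assembled.
        memory = "\n".join(f"- {m}" for m in self.context.memory)
        skills = "\n".join(f"- {n}: {d}" for n, d in self.context.skills.items())
        return (
            f"{self.harness.instructions}\n"
            f"Memory:\n{memory}\n"
            f"Skills:\n{skills}\n"
            f"Task: {task}"
        )

    def run(self, task: str) -> str:
        # The frozen model only ever sees the assembled prompt.
        return self.model(self.build_prompt(task))

    def call_tool(self, name: str, arg: str) -> str:
        # Tools belong to the harness; raises KeyError for unknown tools.
        return self.harness.tools[name](arg)

    def update_harness(self, extra_instruction: str) -> None:
        # Learning at layer 2: revise the instructions, not the model.
        self.harness.instructions += "\n" + extra_instruction

    def remember(self, lesson: str) -> None:
        # Learning at layer 3: store a lesson the next prompt will include.
        self.context.memory.append(lesson)

    def add_skill(self, name: str, description: str) -> None:
        # Also layer 3: register a reusable skill description.
        self.context.skills[name] = description
```

Keeping each layer as a separate object means an improvement can be applied, inspected, or rolled back at the layer where it belongs. A production system would also persist memory and version harness changes, which this sketch omits.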

For businesses investing in AI agents, it is time to stop relying on the magic of weights and shift the focus to optimizing the harness and making effective use of contextual data. This approach yields systems that adapt faster, cost less over the long run, and perform more consistently. That is where the real ROI lies, not in yet another arms race of scaling up model weights.

This shift from weight-centric training to multi-layered optimization gives businesses a pragmatic path to tangible returns on their AI investments. By treating an agent's control logic and its access to information as first-class targets for improvement, companies can build more robust and adaptable AI solutions without the prohibitive cost and complexity of continuous model retraining. It marks a significant departure from the prevailing, and often unsustainable, approach to AI development.