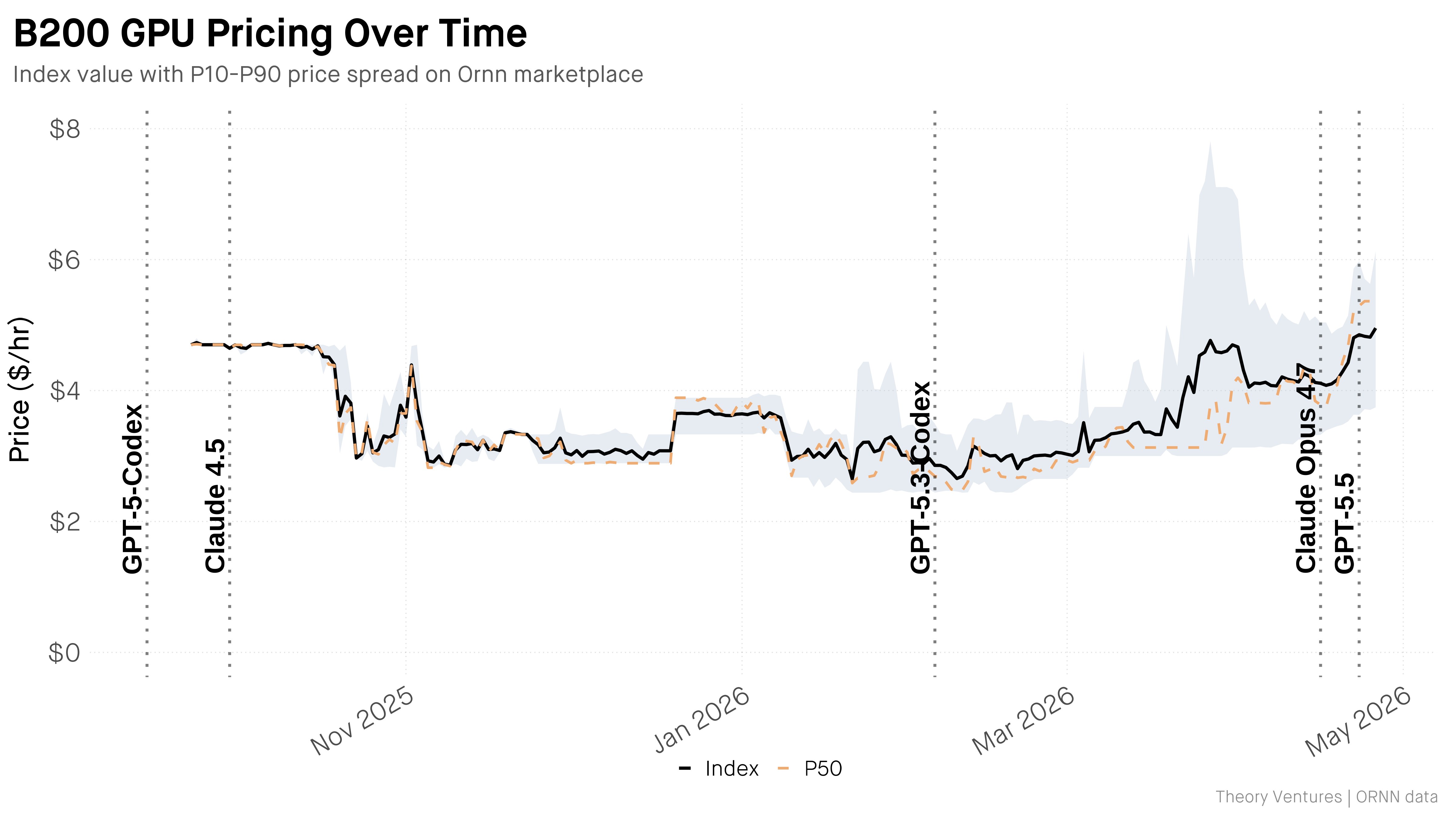

The era of affordable AI compute has collided with the harsh reality of supply shortages as the premium cloud capacity segment enters a period of extreme volatility. According to the Ornn Compute Index, the hourly rental cost for the NVIDIA B200 Blackwell has skyrocketed to $4.95 this week. This is no mere market fluctuation; it represents a 114% surge compared to March levels of $2.31. In just six weeks, the price gap between the cutting-edge Blackwell architecture and the previous Hopper generation (H200) has widened from a symbolic $0.28 to a substantial $1.80 per hour. As analyst Tom Tunguz notes, the uncertainty surrounding the GPU market is dissipating, revealing a sobering fact: the release of each new flagship model now triggers a demand shock that instantly rewrites the economics of AI deployment.
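The headline figures can be checked with a few lines of arithmetic. The sketch below uses only the prices quoted above. The function names are illustrative, and the H200 rate is not reported directly: it is inferred here by subtracting the quoted gap from the B200 rate, which assumes both figures refer to the same week.

```python
# Figures quoted in the article (USD per GPU-hour).
B200_MARCH = 2.31
B200_NOW = 4.95
GAP_NOW = 1.80  # B200 minus H200, this week


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100.0


def implied_h200(b200_price: float, gap: float) -> float:
    """H200 rate implied by a B200 rate and the B200-H200 gap (an inference, not a quoted figure)."""
    return b200_price - gap


surge = pct_change(B200_MARCH, B200_NOW)
h200_now = implied_h200(B200_NOW, GAP_NOW)
premium_over_h200 = GAP_NOW / h200_now * 100.0

print(f"B200 increase since March: {surge:.0f}%")
print(f"Implied H200 rate today: ${h200_now:.2f}/hr")
print(f"B200 premium over H200 today: {premium_over_h200:.0f}%")
```

Under these assumptions the B200 is up about 114% on its March rate, while its premium over the implied H200 rate of $3.15 is closer to 57%. The two percentages measure different things and should not be used interchangeably.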

The correlation between major model releases and the depletion of cloud capacity appears strikingly direct. Per the Ornn Compute Index, every significant launch since September 2025 has been accompanied by a price rally. The latest catalyst was the release of OpenAI's GPT-5.5. As Sam Altman explained during the presentation, the model's expanded context window requires memory volumes that only the Blackwell architecture can effectively support. This creates a technological trap: to run state-of-the-art models at industrial scale, companies are forced to compete on the Blackwell spot market. In effect, the industry is paying an 'infrastructure tax' that has more than doubled in a month and a half. For those seeking maximum performance, the current $1.80 generational gap is a clear signal that the H200 is losing value.

The provider landscape further complicates Total Cost of Ownership (TCO) calculations. Tunguz's data shows that the spread between the cheapest and most expensive market offers has become strikingly wide. B200 prices were tightly clustered in September 2025; today the variance has more than doubled. Some cloud platforms are charging scarcity premiums, while others are offering capacity at rates nearly identical to the older H200. The latter are likely AI startups offloading excess capacity or hyperscalers receiving new hardware batches. The spot market acts as a leading indicator here: price movements in long-term contracts typically follow it with a lag of about 90 days.

With B200 prices unlikely to retreat much from the roughly $5.00 level before the end of summer, the only rational paths forward for businesses are either investing in proprietary small-scale clusters or moving toward aggressive model distillation. The market had anticipated that mass chip production and algorithmic optimization would trigger a deflationary spiral, making AI universally accessible. In reality, the launch of GPT-5.5 turned the Ornn Index chart into a vertical line, forcing companies to pay 114% more than they did in March just for the right to maintain a full-sized context window. Instead of a cheap commodity, we have entered a survival auction.