OpenAI has moved beyond the standard chatbot format with the introduction of workspace agents powered by the Codex model. Where the earlier Custom GPTs often felt like experimental tools for one-off queries, the new agents are positioned as something closer to digital employees. They have their own operational memory and direct access to file systems, and they can execute code in cloud environments without human supervision. The shift is fundamental: the agent no longer waits for a user prompt. Instead, it runs on a schedule or joins Slack discussions to push projects forward while the human team sleeps.

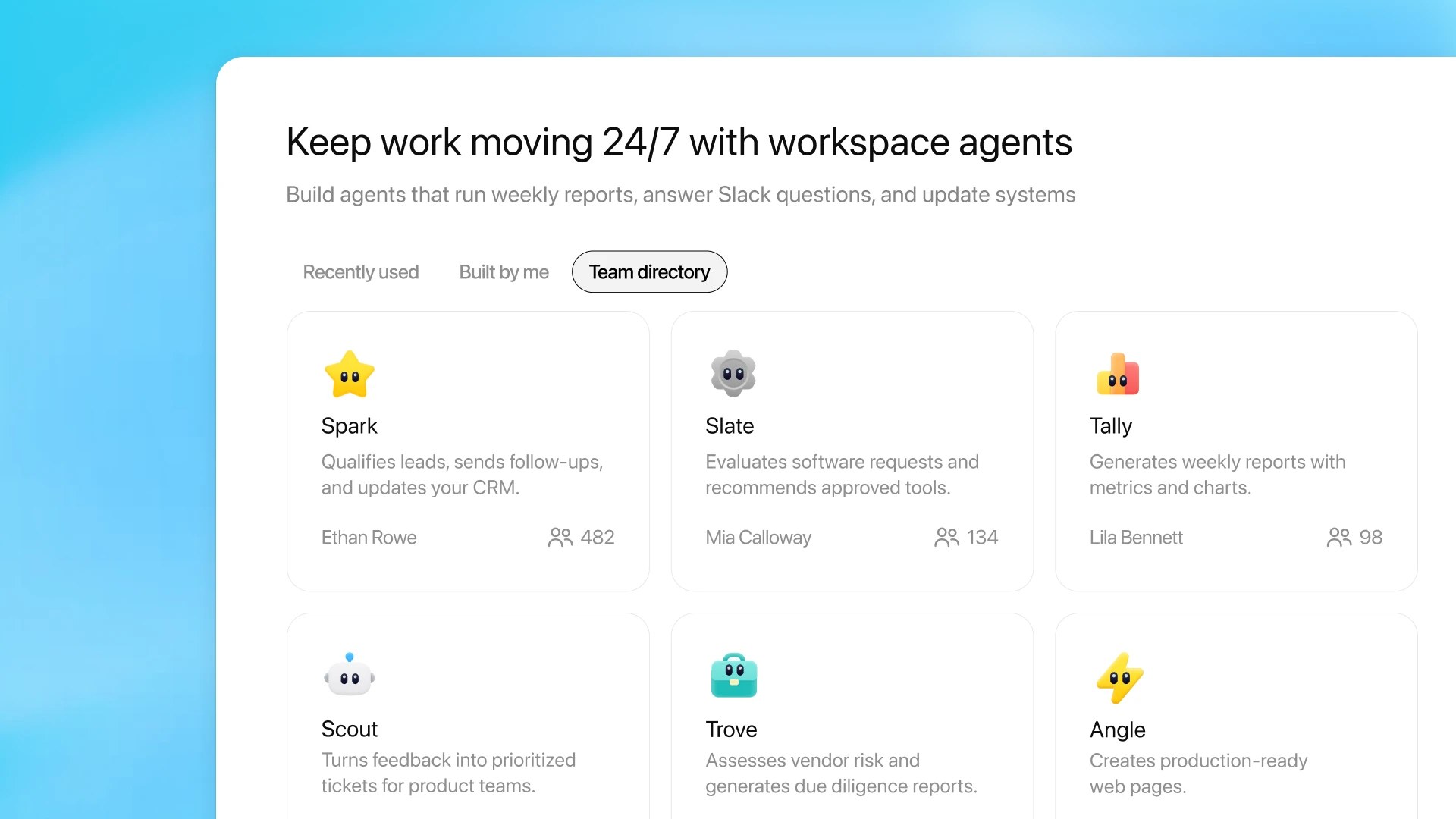

For businesses, the core shift is the move from conversation to action. OpenAI emphasizes that these agents can pull context from internal systems, request human approval at critical stages, and connect directly to third-party software. Internal testing has already produced viable use cases, ranging from an "IT Auditor" that checks employee requests against corporate policies to lead generation tools that autonomously qualify clients and draft emails within a CRM. For leadership, this introduces new levers of control: role-based administration is being rolled out as part of the Research Preview for Business and Enterprise plans. A CTO can now strictly limit the toolset available to each agent and define exactly who within the company is authorized to deploy these autonomous units into the live work environment.
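To make the control model described above more concrete, here is a minimal Python sketch of a per-agent tool allowlist with a human-approval step and a list of users permitted to deploy agents. It is purely illustrative and does not reflect OpenAI's actual admin API: the names `AgentPolicy`, `Gateway`, `authorize`, the tool identifiers such as `crm.read`, and the user `cto` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy (not an OpenAI API object)."""
    name: str
    allowed_tools: frozenset          # tools the agent may call at all
    needs_approval: frozenset = frozenset()  # tools that require a human sign-off


@dataclass
class Gateway:
    """Hypothetical gateway that every agent tool call passes through."""
    policies: dict                    # agent name -> AgentPolicy
    deployers: set                    # users allowed to deploy agents
    audit_log: list = field(default_factory=list)

    def can_deploy(self, user: str) -> bool:
        return user in self.deployers

    def authorize(self, agent: str, tool: str,
                  approved_by: Optional[str] = None) -> str:
        policy = self.policies.get(agent)
        if policy is None or tool not in policy.allowed_tools:
            decision = "deny"
        elif tool in policy.needs_approval and approved_by is None:
            decision = "pending_approval"
        else:
            decision = "allow"
        # Record every decision so it can be reviewed later.
        self.audit_log.append(f"{agent}:{tool}:{decision}")
        return decision


# Example setup: a lead-qualification agent that may read the CRM and
# draft emails freely, but needs a human to approve sending them.
gateway = Gateway(
    policies={
        "lead_qualifier": AgentPolicy(
            name="lead_qualifier",
            allowed_tools=frozenset({"crm.read", "email.draft", "email.send"}),
            needs_approval=frozenset({"email.send"}),
        )
    },
    deployers={"cto"},
)
```

In this sketch, a call such as `gateway.authorize("lead_qualifier", "email.send")` stays pending until a human is named in `approved_by`, while any tool outside the allowlist is denied outright. A real deployment would also need to verify that the approver is authorized, which this example leaves out for brevity.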

From our perspective, we are seeing the sunset of the "prompt engineering" era. You are no longer training a chatbot; you are managing a fleet of autonomous specialists that bridge the gap between raw data and execution tools. For management, this requires a change of priorities: instead of meticulously refining query phrasing, leaders must now concentrate on workflow architecture, defining the boundaries of AI autonomy, and building security gateways for mission-critical corporate operations.