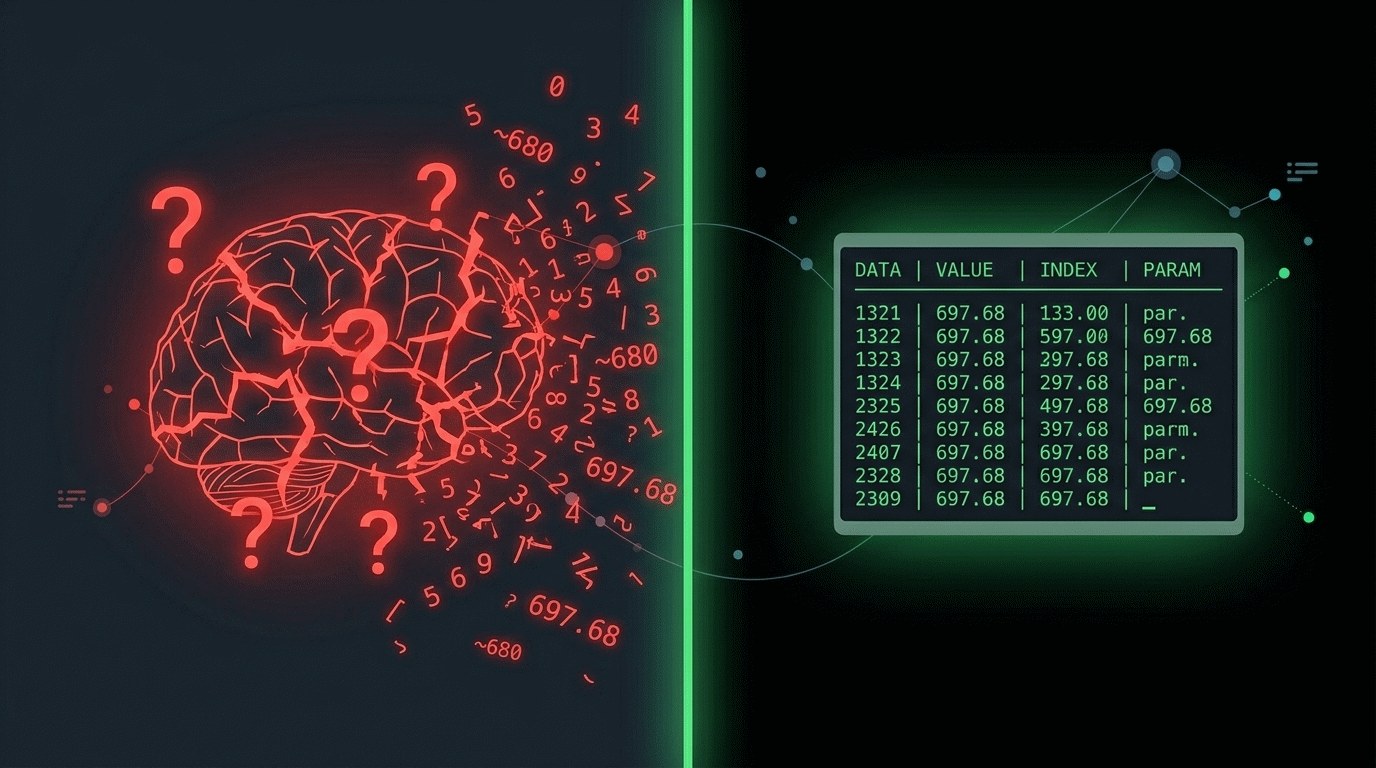

Standard transformer language models predict the next token; they do not call a calculator. Asked for "18 × 38.76", a model returns a plausible-looking string of digits that is often off by 10–30%. This is not a bug but a systemic limitation: the model was trained on text, not on arithmetic. The fix is simple: stop forcing the LLM to compute directly and let it write a program that performs the calculation.
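
For illustration, here is the kind of short program a model could emit for "18 × 38.76" instead of predicting the digits. It is a minimal sketch, not code from the product described below. The function name `multiply_exact` is hypothetical. Using Python's standard `decimal` module rather than binary floats is an assumption, chosen so that decimal inputs such as prices multiply without rounding noise.

```python
from decimal import Decimal


def multiply_exact(a: str, b: str) -> Decimal:
    """Multiply two decimal numbers given as strings, without binary-float rounding."""
    # Building Decimals from strings keeps "38.76" exactly as written;
    # Decimal(38.76) built from a float would inherit the float's representation error.
    return Decimal(a) * Decimal(b)


if __name__ == "__main__":
    print(multiply_exact("18", "38.76"))  # prints 697.68
```

The model's job shrinks to translating the request into two operands and an operator. The interpreter does the arithmetic, so the answer is computed rather than guessed.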

This approach already runs in a live product. A user submits a task such as "Tomsk, HV 320…" through a messenger. The LLM generates a Python script, the script runs inside an isolated Docker container, and the user receives an exact result together with an Excel report. Because the arithmetic is executed rather than predicted, 18 × 38.76 comes out as exactly 697.68, not "about 700".
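
The execution step can be sketched as follows. Everything here is an assumption for illustration: the helper names `docker_command` and `run_generated`, the `python:3.12-slim` image, and the resource limits are hypothetical and do not come from the product described above. The Docker path uses standard `docker run` flags to disable networking, mount the script read-only, and cap memory and CPU. The local path only runs the script in a separate Python process. It exists for testing and is not a sandbox.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def docker_command(script_dir: str, image: str = "python:3.12-slim") -> list[str]:
    """Build a `docker run` invocation for /work/main.py (hypothetical helper and limits)."""
    return [
        "docker", "run", "--rm",
        "--network", "none",             # generated code gets no network access
        "--read-only",                   # container root filesystem is read-only
        "--memory", "256m",              # cap RAM
        "--cpus", "0.5",                 # cap CPU
        "-v", f"{script_dir}:/work:ro",  # mount the script directory read-only
        image,
        "python", "/work/main.py",
    ]


def run_generated(code: str, use_docker: bool = False, timeout: float = 10.0) -> str:
    """Write model-generated code to a temp file, execute it, and return its stdout.

    With use_docker=False the script runs in a separate local interpreter,
    which is NOT isolated from the host; use that mode only for trusted tests.
    """
    with tempfile.TemporaryDirectory() as tmp:
        script = Path(tmp) / "main.py"
        script.write_text(code, encoding="utf-8")
        if use_docker:
            cmd = docker_command(tmp)
        else:
            # -I: isolated mode (ignores PYTHON* env vars and user site-packages)
            cmd = [sys.executable, "-I", str(script)]
        # On timeout the child is killed and TimeoutExpired is raised;
        # check=True raises CalledProcessError on a non-zero exit code.
        result = subprocess.run(cmd, capture_output=True, text=True,
                                timeout=timeout, check=True)
        return result.stdout.strip()


if __name__ == "__main__":
    generated = "from decimal import Decimal\nprint(Decimal('18') * Decimal('38.76'))"
    print(run_generated(generated))  # prints 697.68
```

Writing the script to a file and running it in a separate process keeps model output out of the calling service's memory. The container flags then decide what that process may reach.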

Integrating code generation has cut the development cycle for new features by roughly 20%. It automates writing and testing small modules, which frees developers from repetitive manual coding and re-verification. Because each generated script is executed in a sandbox right away, errors surface immediately and noticeably fewer bugs reach early releases.
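
One way to turn "executed in a sandbox right away" into a development gate is to accept a generated module only if it passes a few test cases in a fresh interpreter. The sketch below assumes such a workflow and does not describe the team's actual pipeline. `accept_generated`, the `add_vat` example, the 20% rate, and the test cases are all hypothetical. The bare subprocess here stands in for a proper container.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def accept_generated(module_code: str, test_code: str, timeout: float = 10.0) -> bool:
    """Return True only if the generated module runs and all of its assertions pass.

    Module and tests are concatenated into one script and run in a separate
    interpreter; any exception, failed assert, or timeout means rejection.
    """
    with tempfile.TemporaryDirectory() as tmp:
        script = Path(tmp) / "candidate.py"
        script.write_text(module_code + "\n\n" + test_code + "\n", encoding="utf-8")
        try:
            result = subprocess.run([sys.executable, "-I", str(script)],
                                    capture_output=True, text=True, timeout=timeout)
        except subprocess.TimeoutExpired:
            return False
        return result.returncode == 0


# Hypothetical model output: add VAT and round to cents
GOOD = '''
from decimal import Decimal, ROUND_HALF_UP

def add_vat(net: str, rate: str = "0.20") -> Decimal:
    gross = Decimal(net) * (1 + Decimal(rate))
    return gross.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
'''

# Same module with a bug: returns only the tax, not net + tax
BAD = GOOD.replace("(1 + Decimal(rate))", "Decimal(rate)")

# Hypothetical test cases shipped with the request
TESTS = '''
assert add_vat("100") == Decimal("120.00")
assert add_vat("697.68") == Decimal("837.22")
'''

if __name__ == "__main__":
    print(accept_generated(GOOD, TESTS))  # True
    print(accept_generated(BAD, TESTS))   # False
```

Because the candidate runs in its own process, a crash or an infinite loop rejects that candidate without taking down the caller.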

For businesses, this enables safe automation of financial calculations, analytics, and customer service, from accounting bots to dynamic pricing. The key condition is strict control over the code execution environment and robust cybersecurity; otherwise, vulnerabilities can erase the gains from precise computation.
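
Container isolation is the primary control. A cheap static check before execution can still reject obviously unsafe scripts early. The sketch below is a hypothetical allowlist filter built on Python's standard `ast` module. The allowed modules and blocked names are illustrative assumptions. Checks like this can be bypassed, so they complement a locked-down sandbox and never replace it.

```python
import ast

# Illustrative policy (assumption): modules a calculation script may import
ALLOWED_MODULES = {"decimal", "math", "statistics", "datetime", "fractions"}
# Names that would let a script escape the policy or touch the host
BLOCKED_NAMES = {"eval", "exec", "compile", "open", "__import__", "__builtins__",
                 "getattr", "globals", "locals", "vars", "input", "breakpoint"}


def policy_violations(code: str) -> list[str]:
    """Return human-readable policy violations in generated code (empty list if none)."""
    try:
        tree = ast.parse(code)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg}"]
    problems = []
    for node in ast.walk(tree):
        # Collect imported module names; relative imports get a leading "."
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = ["." * node.level + (node.module or "")]
        else:
            names = []
        for name in names:
            if name.split(".")[0] not in ALLOWED_MODULES:
                problems.append(f"line {node.lineno}: import of '{name}' not allowed")
        # Any reference to a blocked name, not just a direct call (catches f = eval)
        if isinstance(node, ast.Name) and node.id in BLOCKED_NAMES:
            problems.append(f"line {node.lineno}: use of '{node.id}' blocked")
        # Dunder attributes enable escapes such as ().__class__.__bases__
        if isinstance(node, ast.Attribute) and node.attr.startswith("__"):
            problems.append(f"line {node.lineno}: dunder attribute '{node.attr}' blocked")
    return problems


if __name__ == "__main__":
    print(policy_violations("import os\nos.system('ls')"))
```

A script with violations would be rejected before it ever reaches the container. A clean result only means the script may proceed to the isolated run.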

Why this matters: a 20% shorter development cycle lets you launch new services sooner and frees up team resources. Exact arithmetic reduces the risk of financial errors, which is critical in accounting and analytics. Companies that replace guesswork with code-generated calculations gain a competitive edge in reliability and speed.