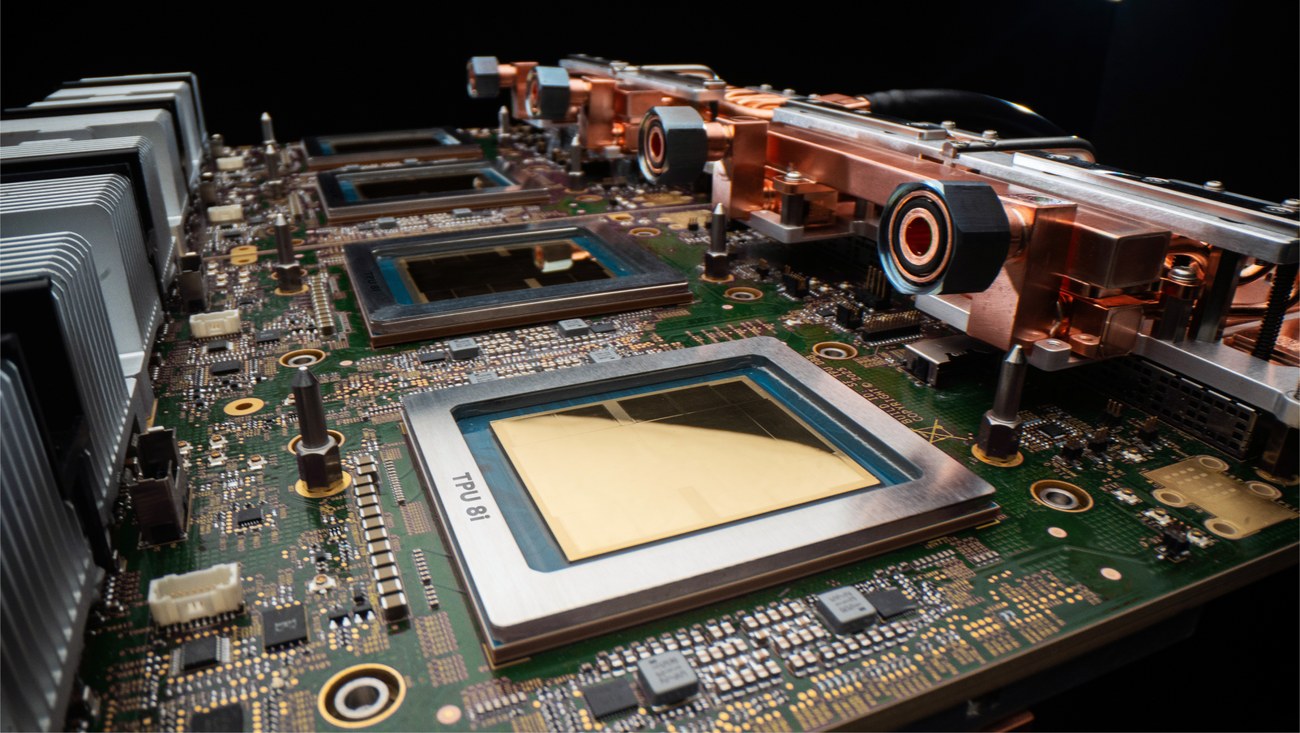

For years, the industry has whispered that general-purpose hardware simply cannot keep up with the voracious appetite of neural networks. At the Google Cloud Next conference, Mountain View finally made the divorce between model training and execution official with the launch of its eighth-generation Tensor Processing Units: the TPU v8. The headline here isn’t just a routine bump in teraflops; it’s the total abandonment of the "one-size-fits-all" silicon strategy. Google’s infrastructure is now split along the model lifecycle: the TPU 8t (training) for heavy-duty model construction and the TPU 8i (inference) for daily operation. In short, Google has admitted that serving both workloads from a single architecture has become too expensive for business.

Behind the "Agentic Era" marketing facade lies cold pragmatism. Modern autonomous agents are not just chatbots churning out text; they are systems locked in long reasoning loops and multi-step workflows. This "thinking" creates a heavy memory load and added latency that, at corporate scale, translate directly into losses. If the TPU 8t is an industrial-scale dump truck boasting 121 exaflops to shrink training cycles from months to weeks, the 8i is the high-speed courier. Its memory bandwidth has been radically increased to ensure agents can "think" faster. From our perspective, Google isn’t just selling transistors; it’s pitching a lower Total Cost of Ownership (TCO) through tight vertical integration, where Gemini is tailored to proprietary silicon.

The mechanics are clear: Google is building a closed ecosystem and throwing down the gauntlet to NVIDIA. While the rest of the market waits in line for the versatile H100, Google is designing supercomputers where software, networking, and chips function as a single organism. For enterprises, the signal is vital: the era of "one model for everything" is over, and efficiency now lives exclusively in specialization. The TPU 8t scales up to 9,600 chips in a single superpod and promises a 97% "goodput" rate by automatically rerouting data around failed nodes. It is an attempt to turn neural network training into a predictable assembly line rather than a lottery plagued by cluster crashes.
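To put the 97% figure in perspective, here is a rough back-of-the-envelope sketch. It assumes goodput means the share of wall-clock time a cluster spends on productive training rather than on failures, restarts, or recomputation; the article does not spell out Google’s exact definition, and the 30-day run length and function names are purely illustrative.

```python
def goodput(total_hours: float, lost_hours: float) -> float:
    """Share of wall-clock time spent on useful training work (assumed definition)."""
    if total_hours <= 0:
        raise ValueError("total_hours must be positive")
    if not 0 <= lost_hours <= total_hours:
        raise ValueError("lost_hours must be between 0 and total_hours")
    return (total_hours - lost_hours) / total_hours


def lost_hours_at(goodput_rate: float, total_hours: float) -> float:
    """Hours lost to failures and restarts at a given goodput rate."""
    if not 0 <= goodput_rate <= 1:
        raise ValueError("goodput_rate must be between 0 and 1")
    return total_hours * (1 - goodput_rate)


# Hypothetical 30-day training run (720 wall-clock hours).
run_hours = 30 * 24
print(f"Lost at 97% goodput: {lost_hours_at(0.97, run_hours):.1f} h")
print(f"Lost at 90% goodput: {lost_hours_at(0.90, run_hours):.1f} h")
```

Under these assumptions, a month-long run at 97% goodput gives up less than a day of cluster time, while the same run at a hypothetical 90% would give up three days. That gap is the "assembly line versus lottery" difference in concrete terms.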

However, outside the corporate data centers, reality may be less polished. The concept of an "agent swarm" seamlessly solving business tasks on specialized 8i chips still feels more like a slick keynote demo than a ready-to-deploy field tool. To realize the promised savings and speed, companies must move entirely into Google’s cloud and play by its rules. While heavyweights like Citadel Securities are already voting for this hardware with their budgets, for mid-sized businesses this strategy implies total vendor lock-in. Then again, when the alternative is waiting six months for a GPU shipment, the choice between freedom and performance becomes purely academic.