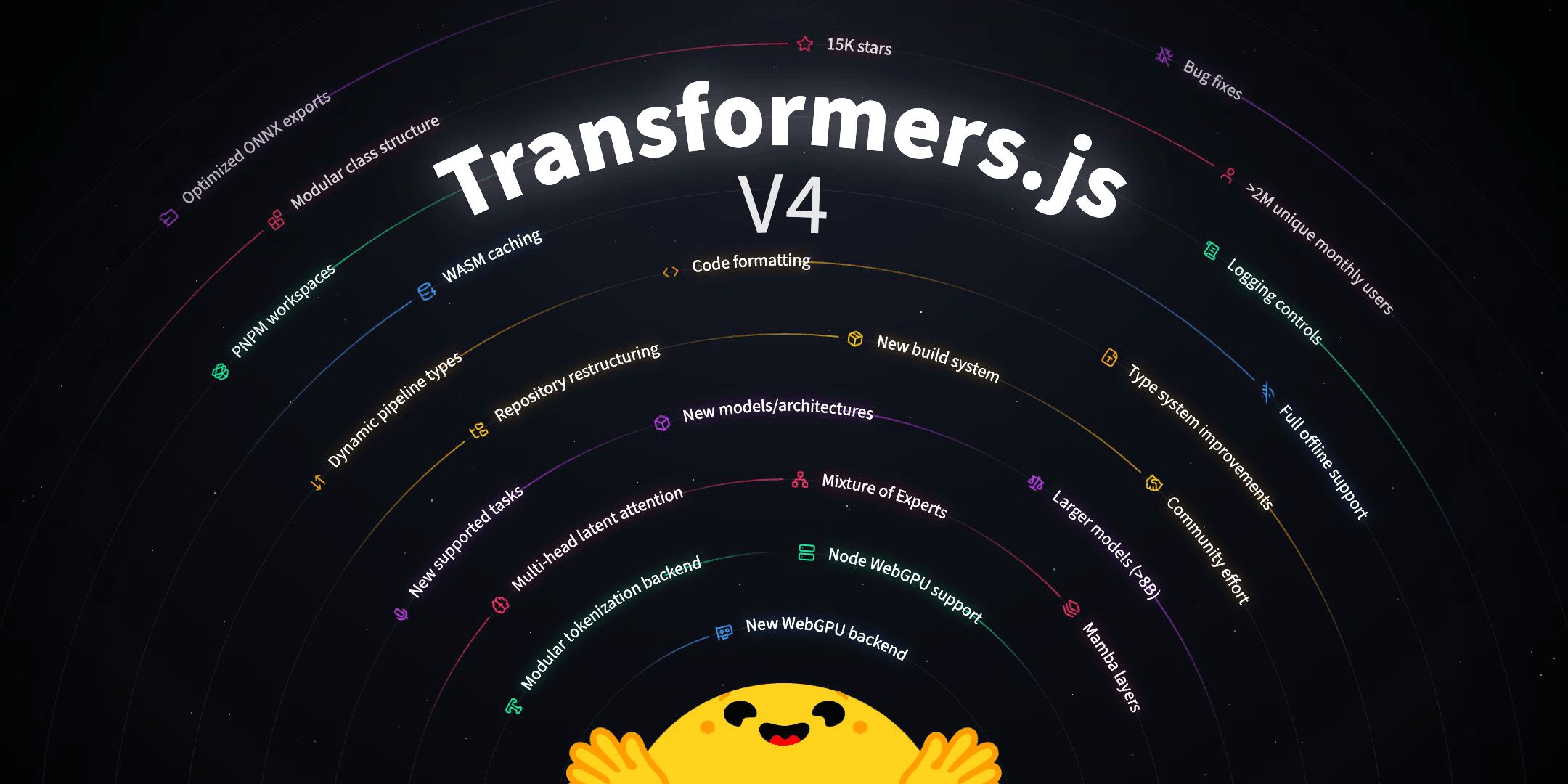

Hugging Face appears to be challenging cloud providers with version 4 of Transformers.js, its JavaScript library for running machine learning models. The release moves a significant share of AI inference out of expensive data centers and into users' browsers. Developers can install it with `npm i @huggingface/transformers@next` (the `next` tag points to the pre-release build) and run inference on client devices rather than on rented servers. For workloads that fit on the client, this reduces dependence on cloud infrastructure providers and, consequently, offers tangible budget savings.
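To make the idea of client-side inference concrete, here is a minimal sketch of computing sentence embeddings in the browser. It relies on the `pipeline` function and the `device` option as documented for Transformers.js; the v4 pre-release may differ in details. The helper names `pickDevice` and `embed` and the model ID `Xenova/all-MiniLM-L6-v2` are illustrative assumptions, not taken from the release announcement.

```javascript
// Hypothetical helper: prefer WebGPU when the runtime exposes `navigator.gpu`,
// otherwise fall back to the WASM backend.
function pickDevice(nav = globalThis.navigator) {
  return nav && "gpu" in nav ? "webgpu" : "wasm";
}

// Hypothetical helper: embed sentences entirely on the client device.
// The model ID is an assumption chosen for illustration.
async function embed(texts, modelId = "Xenova/all-MiniLM-L6-v2") {
  // Loaded lazily so the library is only fetched when embeddings are needed.
  const { pipeline } = await import("@huggingface/transformers");
  const extractor = await pipeline("feature-extraction", modelId, {
    device: pickDevice(),
  });
  // Mean-pool the token embeddings and L2-normalize the result.
  const output = await extractor(texts, { pooling: "mean", normalize: true });
  return output.tolist(); // one array of numbers per input text
}
```

Calling `await embed(["first sentence", "second sentence"])` downloads the model on first use and then runs on the user's hardware. Note that the presence of `navigator.gpu` does not guarantee a usable GPU adapter, so a production version would likely also check `navigator.gpu.requestAdapter()` before choosing WebGPU.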

The core innovation is a new WebGPU runtime written in C++ and optimized to accelerate roughly 200 AI models. Combined with ONNX Runtime, it delivers notable performance gains: Hugging Face reported a four-fold speedup for a BERT-based embedding model after replacing a single component. The same models can run not only in web browsers but also in server-side JavaScript runtimes such as Node.js, Bun, and Deno, and caching of the WASM files enables offline use. This suggests that performance gains for web applications are becoming measurable rather than remaining marketing claims.
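Speedup figures like these depend heavily on the model and the hardware, so it is worth measuring on your own target devices. The sketch below is a small timing harness under stated assumptions: `meanLatencyMs`, `speedup`, and `compareDevices` are hypothetical helpers, the model ID is illustrative, and comparing the `wasm` and `webgpu` backends is not the same experiment as the component swap behind the reported four-fold figure.

```javascript
// Hypothetical helper: mean wall-clock latency of an async function, in ms.
async function meanLatencyMs(fn, runs = 10, warmup = 2) {
  // Warm-up runs absorb one-time costs such as shader compilation.
  for (let i = 0; i < warmup; i++) await fn();
  const start = performance.now();
  for (let i = 0; i < runs; i++) await fn();
  return (performance.now() - start) / runs;
}

// Hypothetical helper: how many times faster the candidate is than the baseline.
function speedup(baselineMs, candidateMs) {
  return baselineMs / candidateMs;
}

// Sketch: time the same embedding model on two backends.
// Assumes both devices are available in the current runtime.
async function compareDevices(text, modelId = "Xenova/all-MiniLM-L6-v2") {
  const { pipeline } = await import("@huggingface/transformers");
  const latency = {};
  for (const device of ["wasm", "webgpu"]) {
    const extractor = await pipeline("feature-extraction", modelId, { device });
    latency[device] = await meanLatencyMs(() =>
      extractor(text, { pooling: "mean", normalize: true })
    );
  }
  return { ...latency, speedup: speedup(latency.wasm, latency.webgpu) };
}
```

The harness takes any async function, so it can also time two builds of the same pipeline against each other.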

The move to a monorepo managed with pnpm workspaces is also strategic. The goal is to ship smaller, more specialized packages, so that an application pulls in only the parts it needs. That can mean smaller bundles, faster loading times, and lower resource consumption on client devices. In simpler terms, AI features should become lighter and quicker, which broadens where they can be applied.

This development is significant because moving AI computation into the browser with Transformers.js v4 and its WebGPU runtime makes advanced AI both more accessible and more economically viable. Hugging Face indicates that savings on server infrastructure could reach 15-20% for applications with substantial AI workloads. Installation through npm also removes a significant barrier to integration. As a result, small teams and startups that were previously priced out of advanced AI deployment can ship such features without a large cloud budget.