Hugging Face has introduced a significant advancement, Ulysses Sequence Parallelism, enabling large language models (LLMs) to be trained on contexts up to one million tokens. This breakthrough means AI can now process entire books or analyze multiple lengthy documents simultaneously, overcoming previous GPU memory limitations. Previously, standard transformers struggled with sequences beyond tens of thousands of tokens due to a quadratic increase in computational resources. Even optimizations like FlashAttention did not fully resolve this bottleneck.

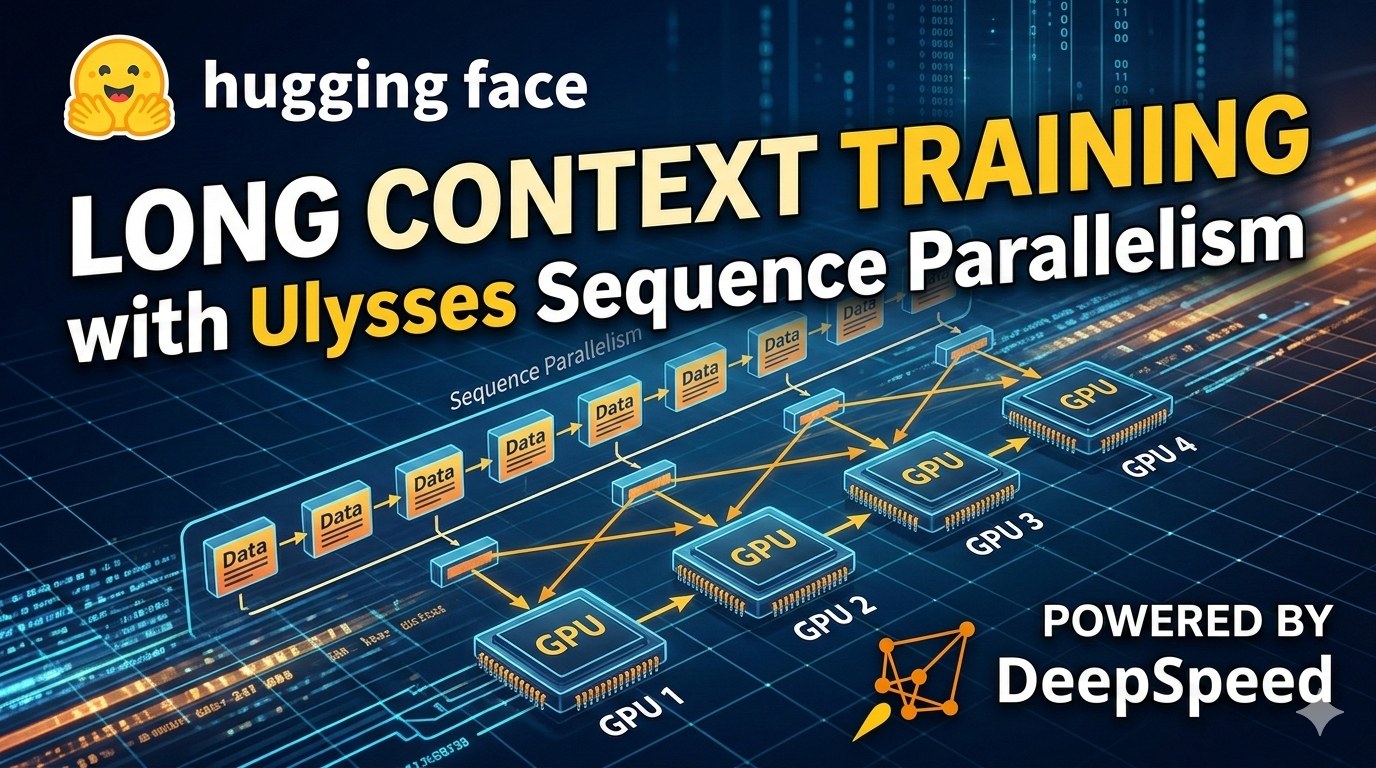

The new development, integrated into the Hugging Face ecosystem, distributes computational load across numerous GPUs. Hugging Face, in collaboration with researchers from Snowflake AI, built upon the Arctic Long Sequence Training (ALST) protocol to implement Ulysses Sequence Parallelism. The core principle involves partitioning the token sequence processing across multiple accelerators, with each GPU handling only a segment of the information. This approach is crucial for tasks such as in-depth analysis of extensive legal documents or scientific papers, understanding complex codebases that rely on multi-file context, and enhancing Retrieval-Augmented Generation (RAG) systems that manage large volumes of retrieved data. The conventional method, where each GPU processed the entire sequence independently, proved insufficient for these demands.

This technology moves beyond mere technical refinement; it paves the way for novel business applications previously out of reach for AI. Imagine AI systems capable of automatically analyzing contracts, grasping all nuances and interconnections within a document, or systems that can comprehend entire sections of technical documentation for rapid information retrieval. For companies dealing with vast datasets, this offers an opportunity for deeper insights and significant reductions in training costs, making powerful LLMs more accessible. When a model can handle book-length contexts, its comprehension approaches human levels, moving beyond simple keyword matching.

This development means businesses can now leverage AI for tasks requiring an understanding of extensive textual information, such as comprehensive contract review or in-depth market research analysis. The ability to process million-token contexts dramatically expands the scope of solvable problems, potentially reshaping how industries approach data analysis and knowledge management. Companies that adopt these advanced LLM capabilities early will likely gain a competitive edge in extracting value from complex information landscapes.