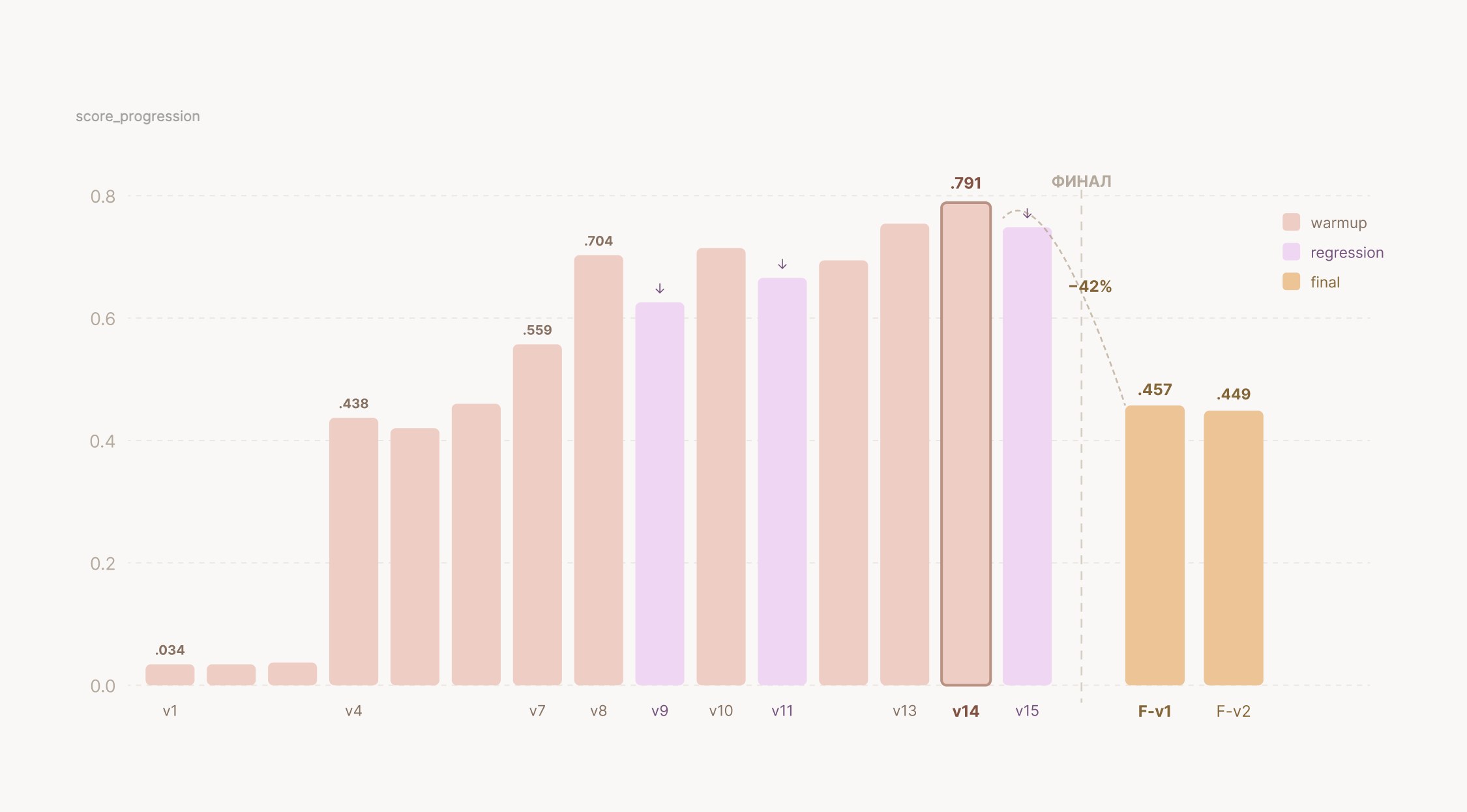

Rapid development of a Legal Retrieval-Augmented Generation (RAG) system under the ARDL 2026 program showed that automating search across legal documents can cut expert review costs by as much as 30 percent, even in pilot projects. A challenge participant built a solution from scratch in five days, raising the F-beta score (β=2.5) from 0.034 at the start of the effort to 0.791, using the Claude Code LLM assistant and a custom answer-extraction pipeline with citations. That climb illustrates how each of the 17 iterations improved text linking quality and model evaluation across five criteria.
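For readers unfamiliar with the metric, F-beta is the weighted harmonic mean of precision P and recall R: F_β = (1 + β²)·P·R / (β²·P + R). With β=2.5, recall counts far more than precision, so missing a relevant citation costs more than adding a spurious one. The sketch below is a minimal illustration, assuming the score is computed over sets of cited (document, page) pairs; the source does not describe the exact scoring procedure, and the names `f_beta` and `citation_f_beta` are hypothetical.

```python
from typing import Hashable, Iterable


def f_beta(precision: float, recall: float, beta: float = 2.5) -> float:
    """Weighted harmonic mean of precision and recall (beta > 1 favors recall)."""
    if not (0.0 <= precision <= 1.0 and 0.0 <= recall <= 1.0):
        raise ValueError("precision and recall must lie in [0, 1]")
    b2 = beta * beta
    denom = b2 * precision + recall
    if denom == 0.0:
        # Both precision and recall are zero, so the score is zero by convention.
        return 0.0
    return (1.0 + b2) * precision * recall / denom


def citation_f_beta(
    predicted: Iterable[Hashable],
    gold: Iterable[Hashable],
    beta: float = 2.5,
) -> float:
    """F-beta over sets of citations, e.g. (document, page) pairs.

    Assumption: citations are compared by exact match. Returning 0.0 when
    either set is empty is a choice made for this sketch only.
    """
    pred_set, gold_set = set(predicted), set(gold)
    if not pred_set or not gold_set:
        return 0.0
    hits = len(pred_set & gold_set)
    return f_beta(hits / len(pred_set), hits / len(gold_set), beta)


if __name__ == "__main__":
    # Illustrative data only, not taken from the challenge.
    predicted = [("contract.pdf", 3), ("contract.pdf", 4), ("policy.pdf", 1)]
    gold = [("contract.pdf", 3), ("contract.pdf", 5)]
    print(round(citation_f_beta(predicted, gold), 4))
```

Because β is greater than 1, a system with perfect recall and 50 percent precision scores higher than one with perfect precision and 50 percent recall, which matches the priorities of legal review, where an overlooked source is the costlier error.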

When the document volume grew from dozens to hundreds, quality fell sharply: processing 300 files caused a drop of roughly 42 percent in overall quality. The decline showed up in link precision and in the S_det and S_asst metrics, and the final Total score, computed by the defined formula, fell accordingly. The root causes were rising computational load, the need for fine-tuned indexing, and escalating expenses for engineering quality control, costs that are often omitted from ROI calculations.
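A drop like this is easier to catch when the evaluation is rerun at several corpus sizes and compared against the smallest one. The following is a minimal sketch of such a check, not the participant's tooling: the function names, the 10 percent threshold, and the scores are all hypothetical, and the drop is assumed to be relative to the small-corpus baseline.

```python
def relative_drop(baseline: float, current: float) -> float:
    """Fraction of the baseline score that was lost (0.42 means a 42 percent drop)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) / baseline


def check_regression(
    scores_by_corpus_size: dict[int, float],
    max_drop: float = 0.10,
) -> list[tuple[int, float]]:
    """Return (corpus_size, drop) pairs whose score fell by more than max_drop.

    The score at the smallest corpus size serves as the baseline.
    """
    sizes = sorted(scores_by_corpus_size)
    if not sizes:
        return []
    baseline = scores_by_corpus_size[sizes[0]]
    flagged = []
    for size in sizes[1:]:
        drop = relative_drop(baseline, scores_by_corpus_size[size])
        if drop > max_drop:
            flagged.append((size, drop))
    return flagged


if __name__ == "__main__":
    # Illustrative scores only, not measurements from the challenge.
    scores = {30: 0.50, 100: 0.47, 300: 0.29}
    for size, drop in check_regression(scores):
        print(f"{size} documents: quality down {drop:.0%} from baseline")
```

Running a check like this after every indexing or retriever change makes the cost of scaling visible early, before it reaches the final Total score.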

To make Legal RAG a genuinely useful tool for compliance departments, combine LLM assistants (Claude Code in this example) with custom F-beta metrics and an iterative pipeline in which each cycle includes retraining the retriever, validating page-level linking, and monitoring telemetry. Budget planning should cover not only model licensing but also salaries for data engineers, DevOps support, and ongoing quality audits; otherwise, scaling document volume will turn the promised savings into hidden losses.

Why this matters: companies that factor in hidden infrastructure costs can preserve the advertised savings of up to 30 percent and avoid a quality drop of more than 40 percent when they scale. This shifts legal AI from a "quick pilot" mindset to a sustainable system in which efficiency is measured by both cost reduction and the stability of accurate legal conclusions.