Modern business has become dangerously dependent on cloud computing, paying a premium for massive computing power where it simply isn't needed. A recent experiment by venture capitalist Tom Tunguz brings this into sharp focus: after tracking 1,476 AI requests over five weeks, he found that half of them did not require massive models with trillions of parameters. The workload was dominated by routine operations: meeting scheduling accounted for 17.2% of requests, while market research took up 13%. When 50% of your workload consists of text summarization, administration, and basic engineering, sending that data to the cloud is like driving a mining dump truck to the grocery store.

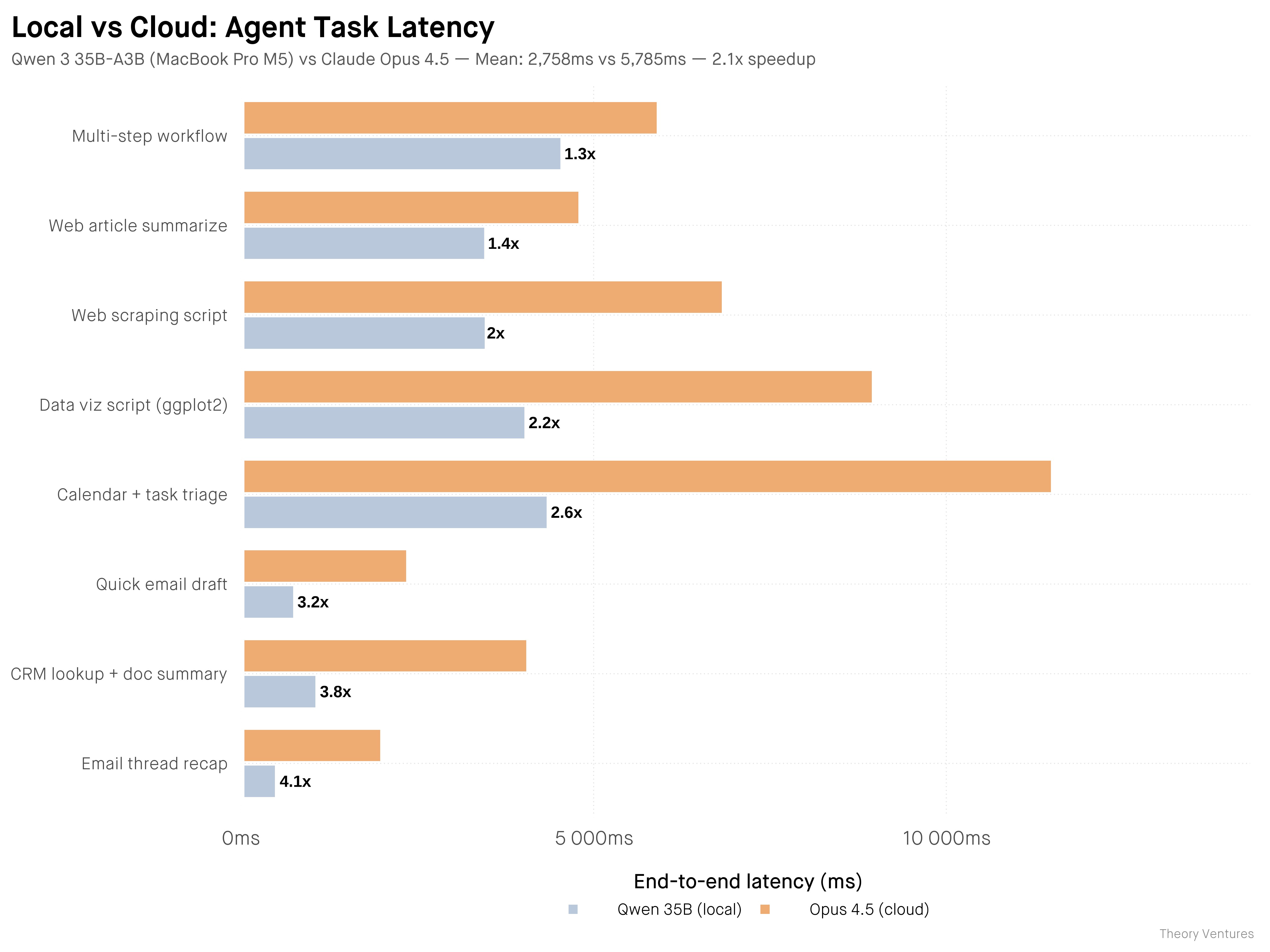

The economics of switching to Small Language Models (SLMs) are purely pragmatic: you trade the "token tax" for fuller use of hardware you already own. As Tunguz points out, your M-series MacBook Pro depreciates every day whether you use its Neural Engine or not. Running local models lets you extract value from an asset that would otherwise sit idle. In a head-to-head comparison between a local Qwen 35B model and Claude via API, the on-device solution was twice as fast. Top-tier models like Opus 4.5 still outperform local rivals on reasoning tasks by about 20%, but the excessive "eloquence" of cloud giants is often a waste of time and money for agentic tasks, where brevity and low latency are paramount.

In this context, data security isn't just a marketing slogan; it's a baseline requirement. Local inference is the most direct way to handle sensitive information (M&A deals, competitive intelligence, or internal transcripts) without the risk of your secrets leaking into the training sets of Big Tech: data that never leaves the device cannot be collected. The industry spent years convincing us that intelligence only exists in massive server clusters, but reality has proven otherwise. Open-weight models like Llama or Mistral now trail flagship models by just a few months.
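The split described so far, routine or sensitive work on the device and hard reasoning in the cloud, can be sketched as a simple routing rule. This is a minimal illustration, not Tunguz's actual setup: the category names, the `Request` type, and the `route` and `local_share` helpers are all hypothetical, and a real router would likely classify requests with a model rather than a fixed set.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"   # on-device small model
    CLOUD = "cloud"   # hosted frontier model


# Hypothetical category labels; the routine ones echo the examples in the text.
ROUTINE_TASKS = {
    "meeting_scheduling",
    "market_research",
    "summarization",
    "administration",
}


@dataclass(frozen=True)
class Request:
    task_type: str
    sensitive: bool = False  # e.g. M&A material or internal transcripts


def route(request: Request) -> Target:
    """Pick where a request runs under the assumed policy."""
    # Privacy wins: sensitive data never leaves the device.
    if request.sensitive:
        return Target.LOCAL
    # Routine work does not need a frontier model.
    if request.task_type in ROUTINE_TASKS:
        return Target.LOCAL
    # Everything else (e.g. hard reasoning) goes to the cloud.
    return Target.CLOUD


def local_share(requests: list[Request]) -> float:
    """Fraction of requests that would be served on-device."""
    if not requests:
        return 0.0
    local = sum(1 for r in requests if route(r) is Target.LOCAL)
    return local / len(requests)
```

Running `local_share` over a log of your own requests gives a rough estimate of how much of your workload could stay on the device. Checking sensitivity before task type is a deliberate choice in this sketch: a confidential request stays local even when a cloud model would answer it better.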

For CIOs and business owners, 2024 marks a paradigm shift. AI budgets should move away from endless cloud subscriptions and toward in-house infrastructure and small models optimized for specific industry tasks. We are witnessing the end of the era of the universal giant. The future belongs to specialized stacks running within the corporate perimeter. Half of your workload can and should be handled by the device already sitting on your desk: faster, more privately, and, most importantly, with no per-token fee.